Introduction

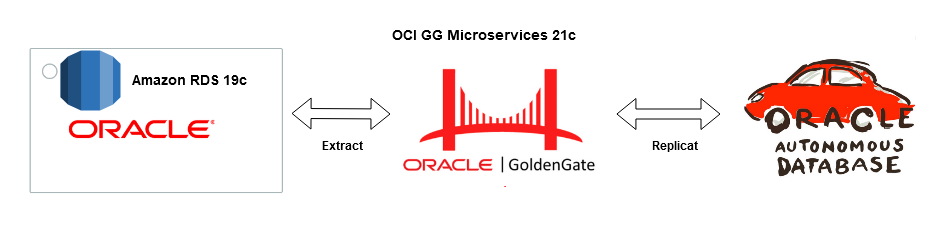

GoldenGate Microservices 21c is the latest version of the microservices architecture, which makes creating a data mesh or data fabric across different public clouds as easy as a few clicks. GoldenGate is available on OCI as a fully managed service with auto-scaling. It does not require installation of GoldenGate software on either the source or the target database instance. GoldenGate uses a capture-and-apply mechanism for replication based on trail files. Both the Extract (capture) and Replicat (apply) processes run on the GoldenGate replication instance, which acts as a hub.

Let us go ahead and create a data pipeline for replicating data in real time using Oracle Cloud Infrastructure (OCI) GoldenGate 21c, from an Amazon RDS Oracle instance to an Oracle Autonomous Database in OCI. Below are some of the common use cases for this solution:

Use Cases

- Cross-cloud replication of Oracle Database from AWS RDS to OCI

- Migration of Oracle Database with Zero Downtime from AWS RDS to OCI

- Creating Multi-Cloud Microservices Application with Oracle database as the persistent data store

- Creating a Multi-cloud Data Mesh for Oracle Database

Architecture

Source : Amazon RDS Oracle 19c EE

Target : OCI Autonomous Transaction Processing 19c

Replication Hub : OCI Goldengate 21c Microservices

Network : Site-to-Site IPsec VPN or FastConnect (Direct Connect on AWS)

The solution is broadly divided into four phases :

- Setup of RDS Instance and Preparing Source for Goldengate replication

- Setup of OCI Autonomous Database and Preparing Target for Goldengate Replication

- Creation of the OCI GoldenGate Deployment and Registration of the Source and Target Databases

- Creation of the Extract (Capture) and Replicat (Apply) processes on OCI GoldenGate

Phase 1 — AWS Setup : RDS Source and Enable Goldengate Capture

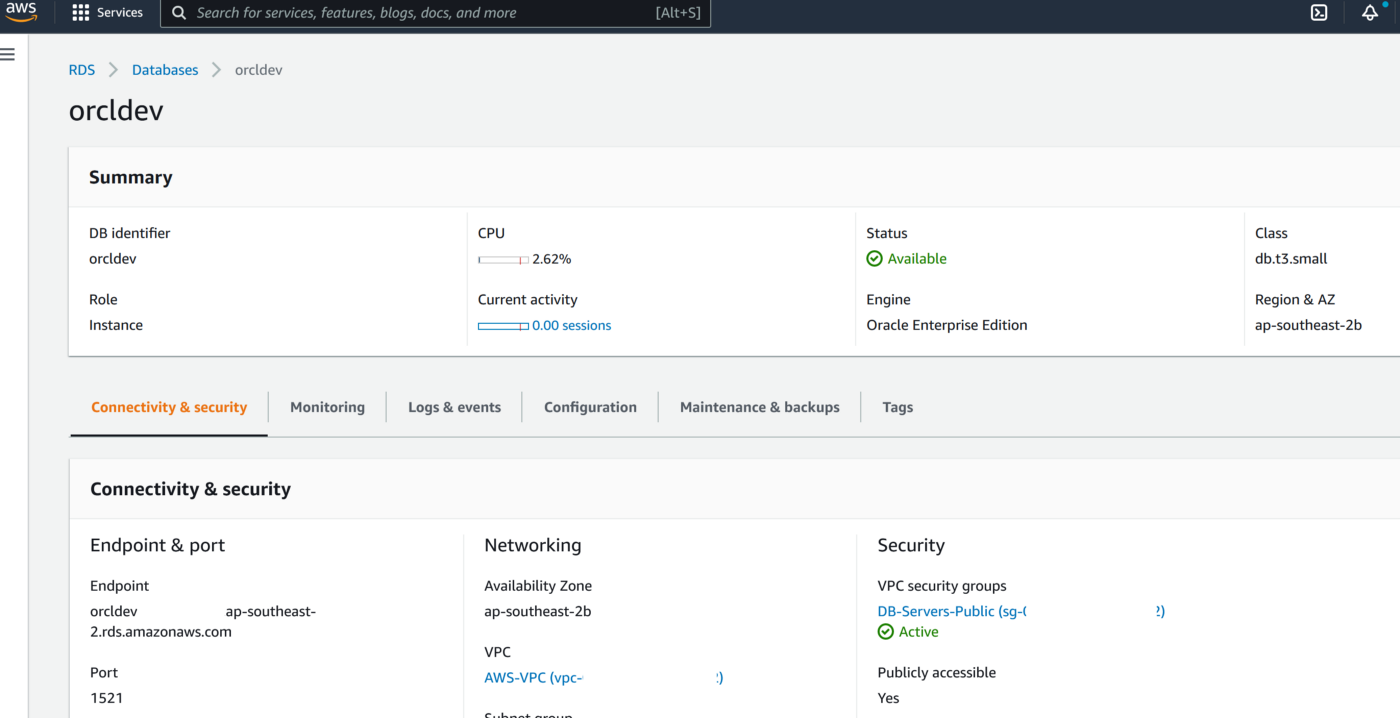

The first part of the setup requires us to provision a VPC, a subnet group and an Oracle 19c RDS instance on AWS. Please ensure all the requisite network constructs, such as security groups, are in place for connectivity from OCI GoldenGate to RDS. In a production scenario it would be better to have the RDS instance without a public endpoint and to have a FastConnect setup between AWS and OCI.

1. Create a VPC and RDS Subnet Group

2. Create RDS Oracle Instance 19.1 EE with super user as ‘admin’

3. Create a new DB Parameter Group for 19.1 EE with parameter ENABLE_GOLDENGATE_REPLICATION set to TRUE

4. Change the parameter group of the RDS instance and reboot the RDS Oracle instance once the parameter group has been applied. Double-check that the parameter ENABLE_GOLDENGATE_REPLICATION is set to TRUE and that the correct parameter group is applied to the RDS instance
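The double-check can also be done from a SQL client after the reboot. This is a minimal sketch, assuming the 'admin' user is allowed to read V$PARAMETER on your instance:

```sql
-- Confirm that the new parameter group took effect after the reboot.
-- VALUE should read TRUE once the parameter group is in sync.
SELECT name, value
FROM   v$parameter
WHERE  name = 'enable_goldengate_replication';
```

If the value is still FALSE, the instance is most likely still attached to the old parameter group or is waiting for a reboot.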

5. Set the log retention period on the source DB with ‘admin’ user

exec rdsadmin.rdsadmin_util.set_configuration('archivelog retention hours',24);commit;
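To confirm the retention setting was stored, the RDS utility package can print the current configuration. A short sketch, run as the 'admin' user from SQL*Plus or a compatible client:

```sql
-- Show the current RDS configuration values, including
-- 'archivelog retention hours', in the client output.
set serveroutput on
exec rdsadmin.rdsadmin_util.show_configuration;
```

Keep in mind that retained archive logs consume storage on the RDS instance, so size the retention window to cover the longest outage you expect the Extract to recover from.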

6. Create a new user account to be used for GoldenGate on the RDS instance with ‘admin’ user

CREATE TABLESPACE administrator;

CREATE USER oggadm1 IDENTIFIED BY "*********" DEFAULT TABLESPACE ADMINISTRATOR TEMPORARY TABLESPACE TEMP;

commit;

7. Grant account privileges on the source RDS instance with ‘admin’ user

GRANT CREATE SESSION, ALTER SESSION TO oggadm1;

GRANT RESOURCE TO oggadm1;

GRANT SELECT ANY DICTIONARY TO oggadm1;

GRANT FLASHBACK ANY TABLE TO oggadm1;

GRANT SELECT ANY TABLE TO oggadm1;

GRANT SELECT_CATALOG_ROLE TO admin WITH ADMIN OPTION;

exec rdsadmin.rdsadmin_util.grant_sys_object ('DBA_CLUSTERS', 'OGGADM1');

exec rdsadmin.rdsadmin_util.grant_sys_object ('DBA_CLUSTERS', 'ADMIN');

GRANT EXECUTE ON DBMS_FLASHBACK TO oggadm1;

GRANT SELECT ON SYS.V_$DATABASE TO oggadm1;

GRANT ALTER ANY TABLE TO oggadm1;

grant unlimited tablespace TO oggadm1;

grant EXECUTE_CATALOG_ROLE to admin WITH ADMIN OPTION;

commit;
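Before moving on, it can help to list what the GoldenGate user actually received. The queries below are a sketch that assumes the 'admin' user can read the DBA_* dictionary views on the RDS instance:

```sql
-- System privileges granted directly to the GoldenGate user.
SELECT privilege
FROM   dba_sys_privs
WHERE  grantee = 'OGGADM1'
ORDER  BY privilege;

-- Roles granted to the GoldenGate user (for example RESOURCE).
SELECT granted_role
FROM   dba_role_privs
WHERE  grantee = 'OGGADM1';
```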

8. Finally, grant the privileges needed by a user account to be a GoldenGate administrator. The package that you use to perform the grant, dbms_goldengate_auth or rdsadmin_dbms_goldengate_auth, depends on the Oracle DB engine version.

-- With admin user on RDS Oracle instance for Oracle Database version lower than 12.2 --

exec dbms_goldengate_auth.grant_admin_privilege (grantee=>'OGGADM1',privilege_type=>'capture',grant_select_privileges=>true, do_grants=>TRUE);

exec dbms_goldengate_auth.grant_admin_privilege('OGGADM1',container=>'all');

exec dbms_goldengate_auth.grant_admin_privilege('OGGADM1');

commit;

-- For Oracle DB versions that are later than or equal to Oracle Database 12c Release 2 (12.2), which requires patch level 12.2.0.1.ru-2019-04.rur-2019-04.r1 or later, run the following PL/SQL program.

exec rdsadmin.rdsadmin_dbms_goldengate_auth.grant_admin_privilege (grantee=>'OGGADM1', privilege_type=>'capture',grant_select_privileges=>true, do_grants=>TRUE);

commit;

To revoke privileges, use the procedure revoke_admin_privilege in the same package.
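Whichever package was used, the result of the grant can be inspected through the DBA_GOLDENGATE_PRIVILEGES dictionary view. This is a sketch that assumes the 'admin' user has access to the view on your RDS instance:

```sql
-- One row is expected for OGGADM1 with PRIVILEGE_TYPE = CAPTURE
-- after the grant_admin_privilege call shown above.
SELECT username, privilege_type, grant_select_privileges
FROM   dba_goldengate_privileges
WHERE  username = 'OGGADM1';
```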

9. TNS entry for AWS RDS Instance

OGGTARGET=(DESCRIPTION=(ENABLE=BROKEN)(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=orcl.*****.ap-southeast-2.rds.amazonaws.com)(PORT=1521)))(CONNECT_DATA=(SID=ORCL)))

-- To be added to Registered Database in OCI --

(DESCRIPTION=(ENABLE=BROKEN)(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=orcl.****.ap-southeast-2.rds.amazonaws.com)(PORT=1521)))(CONNECT_DATA=(SID=ORCL)))

Alias (to be used later in OCI GG configuration) : ORCLAWS

10. Create Test Table in RDS Oracle Instance

CREATE TABLE oggadm1.test (id number,name varchar2(100));

insert into oggadm1.test values (1,'Shadab');

insert into oggadm1.test values (2,'Mohammad');

commit;

11. Enable force logging and supplemental logging with the ‘admin’ user

-- Enable Force logging --

EXEC rdsadmin.rdsadmin_util.force_logging(p_enable => true);

-- Enable Supplemental logging --

begin rdsadmin.rdsadmin_util.alter_supplemental_logging(p_action => 'ADD');

end;

/
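The logging state can be read back from V$DATABASE, which the GoldenGate user was granted access to earlier. A minimal check, under the assumption that automated backups are enabled on the RDS instance (RDS only runs Oracle in ARCHIVELOG mode when they are):

```sql
-- Expected for GoldenGate capture:
--   LOG_MODE                  = ARCHIVELOG
--   FORCE_LOGGING             = YES
--   SUPPLEMENTAL_LOG_DATA_MIN = YES
SELECT log_mode, force_logging, supplemental_log_data_min
FROM   v$database;
```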

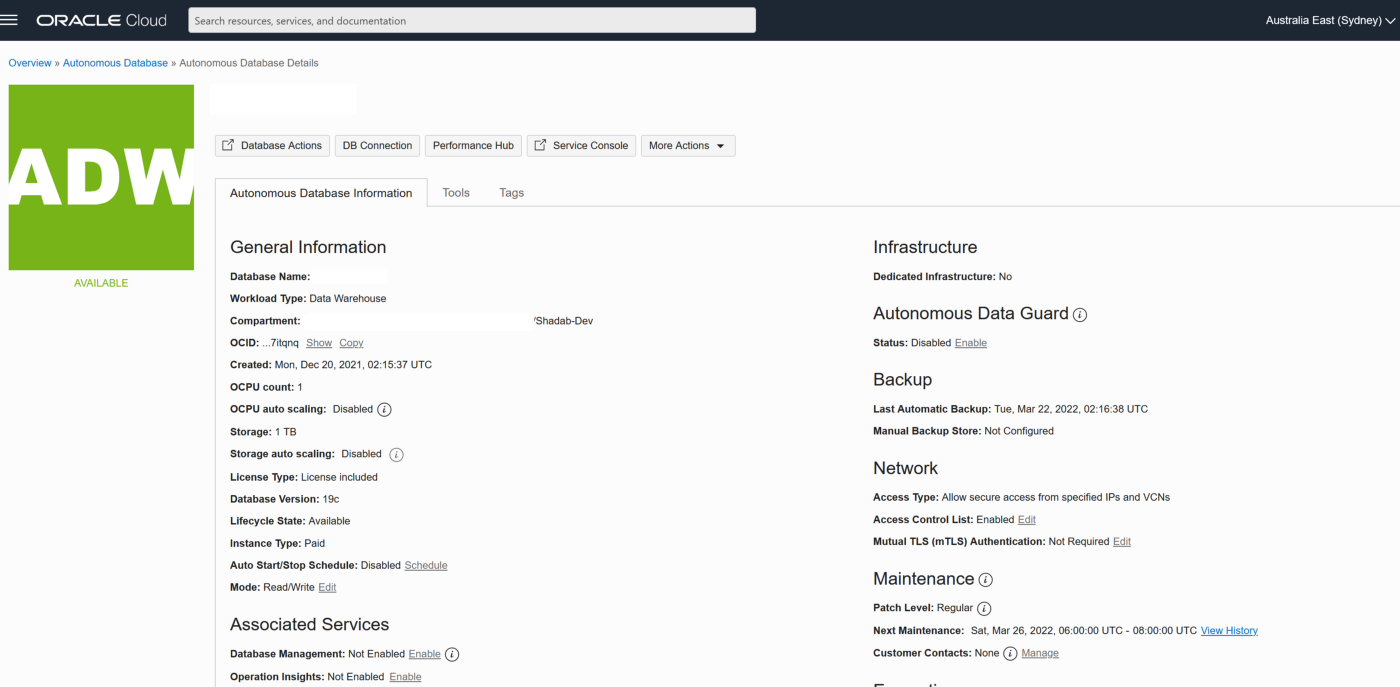

Phase 2 — OCI Setup : Autonomous Database

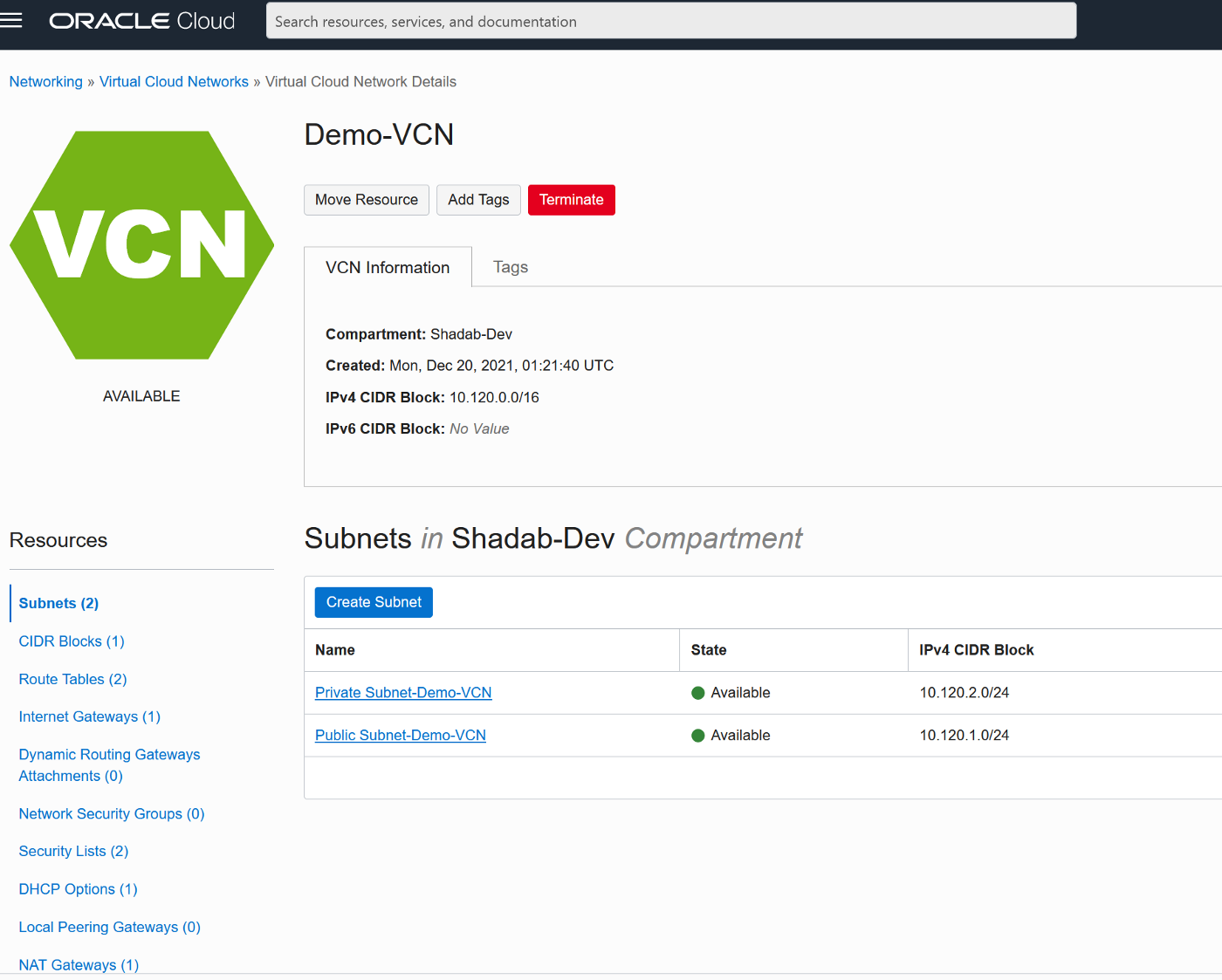

We will provision the VCN and the Autonomous Database on OCI and enable the GoldenGate replication user.

1. Create VCN

2. Create Autonomous Transaction Processing Database with Network Options and mTLS not required

3. Unlock ggadmin user in the ATP

alter user ggadmin identified by ****** account unlock;
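A quick way to confirm the unlock worked, sketched here on the assumption that you are connected to the ATP as ADMIN:

```sql
-- ACCOUNT_STATUS should read OPEN once ggadmin has been unlocked.
SELECT username, account_status
FROM   dba_users
WHERE  username = 'GGADMIN';
```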

4. Create the table ‘test’ in the admin schema and do the initial load (normally this would be done using Data Pump, but that is beyond the scope of this article)

CREATE TABLE test (id number,name varchar2(100));

insert into test values (1,'Shadab');

insert into test values (2,'Mohammad');

commit;

select * from test;

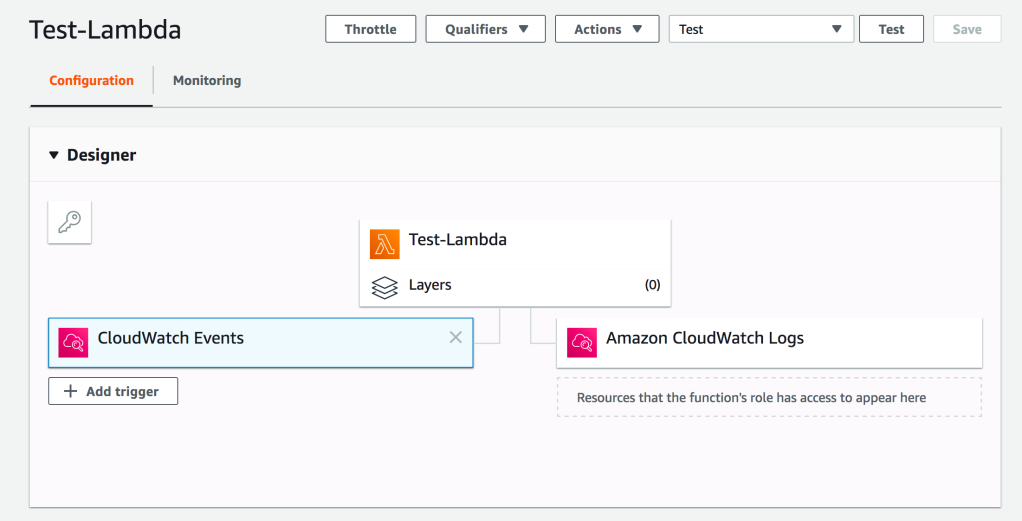

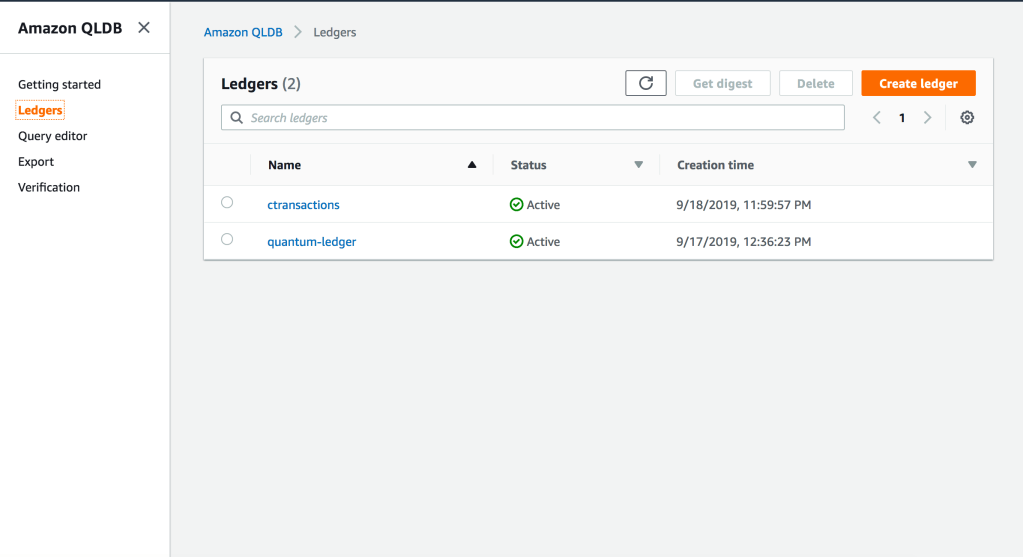

Phase 3 — OCI Setup : Goldengate

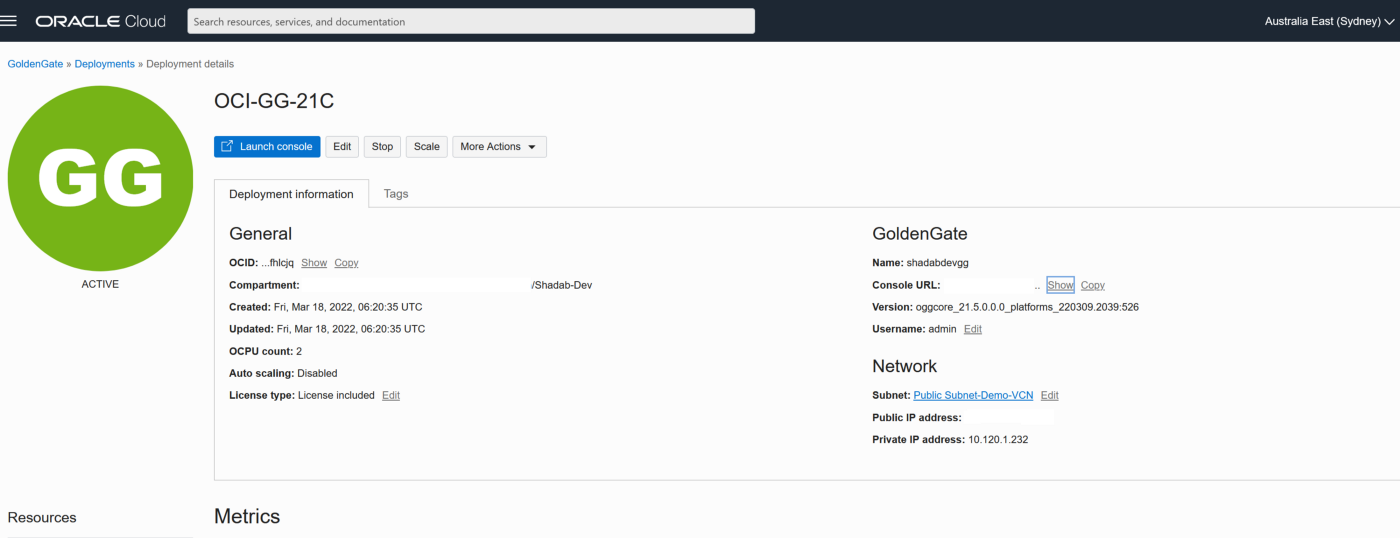

1. In the OCI Console, go to Oracle Database > GoldenGate > Deployments > Create Deployment

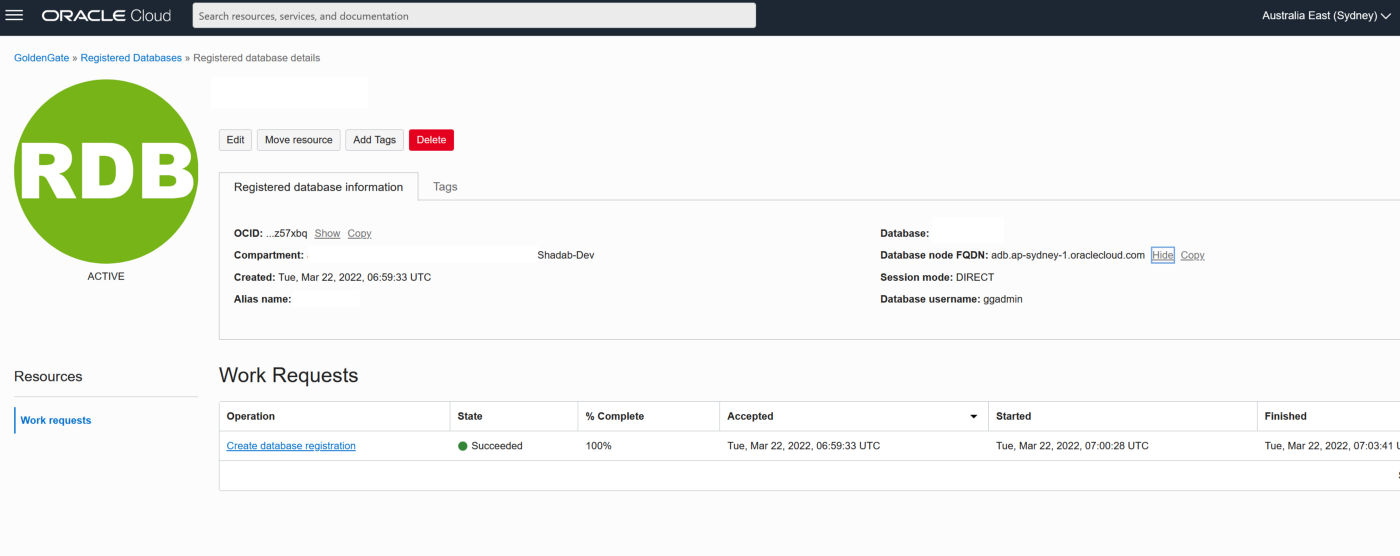

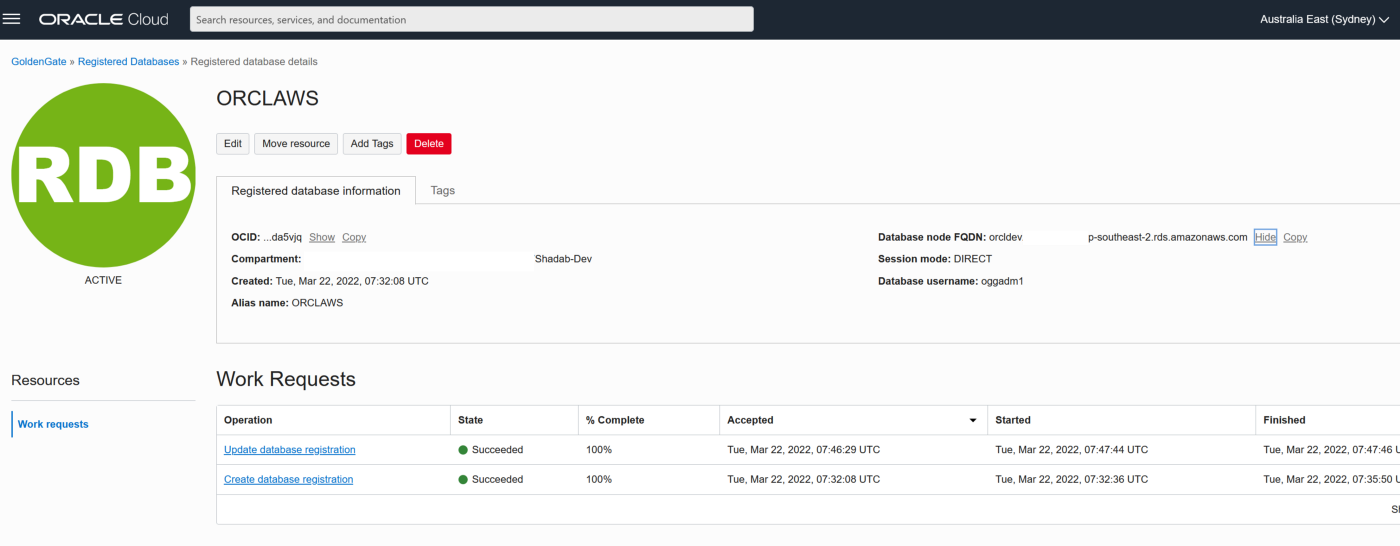

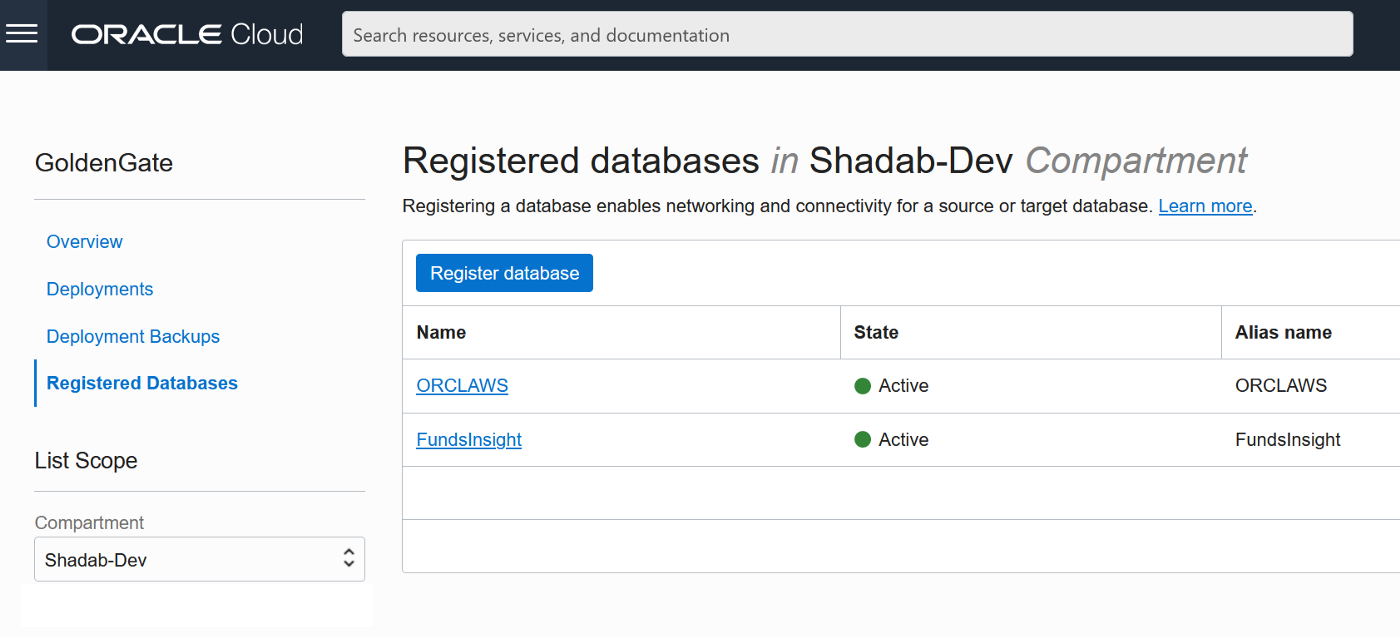

2. Go to Oracle Database > Goldengate > Registered Databases

a. Add the ATP database created above with the ggadmin user

b. Add the RDS instance database using oggadm1 user

3. Test the connectivity to both databases; each should show in the console as Active

4. Go to the launch URL for the GoldenGate deployment and log in with the username and password set in step 1.

e.g. : https://e*******q.deployment.goldengate.ap-sydney-1.oci.oraclecloud.com/

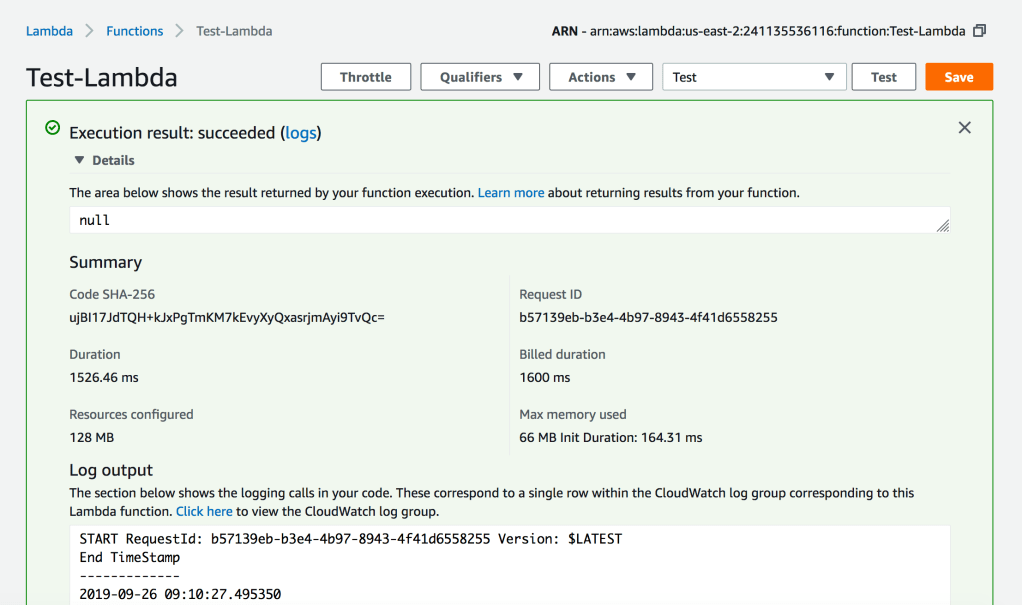

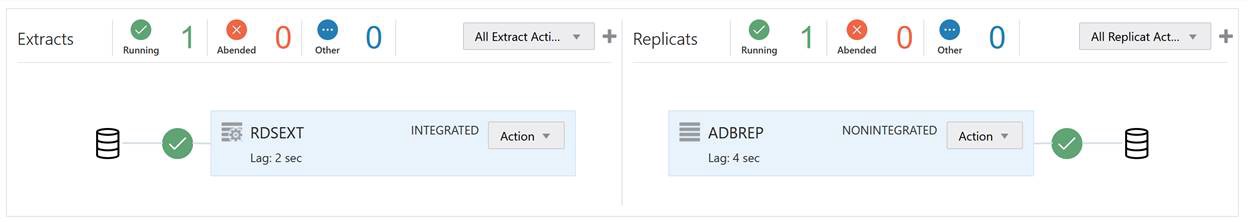

Phase 4 — Create Extract (Capture) and Replicat (Apply) and Start the Replication

1. Create an Integrated Extract from the Administration Service by clicking on the plus symbol next to the Extracts section

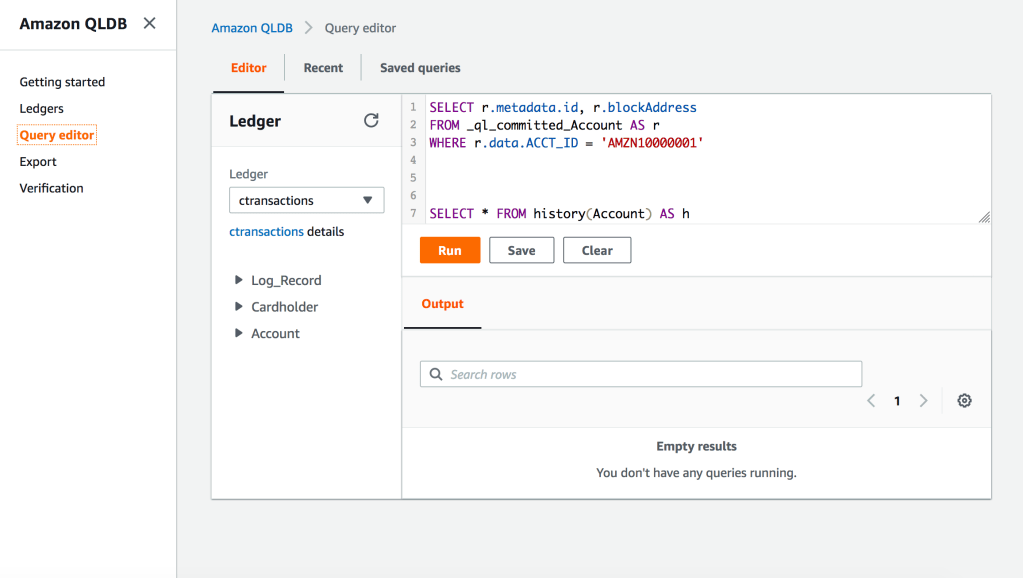

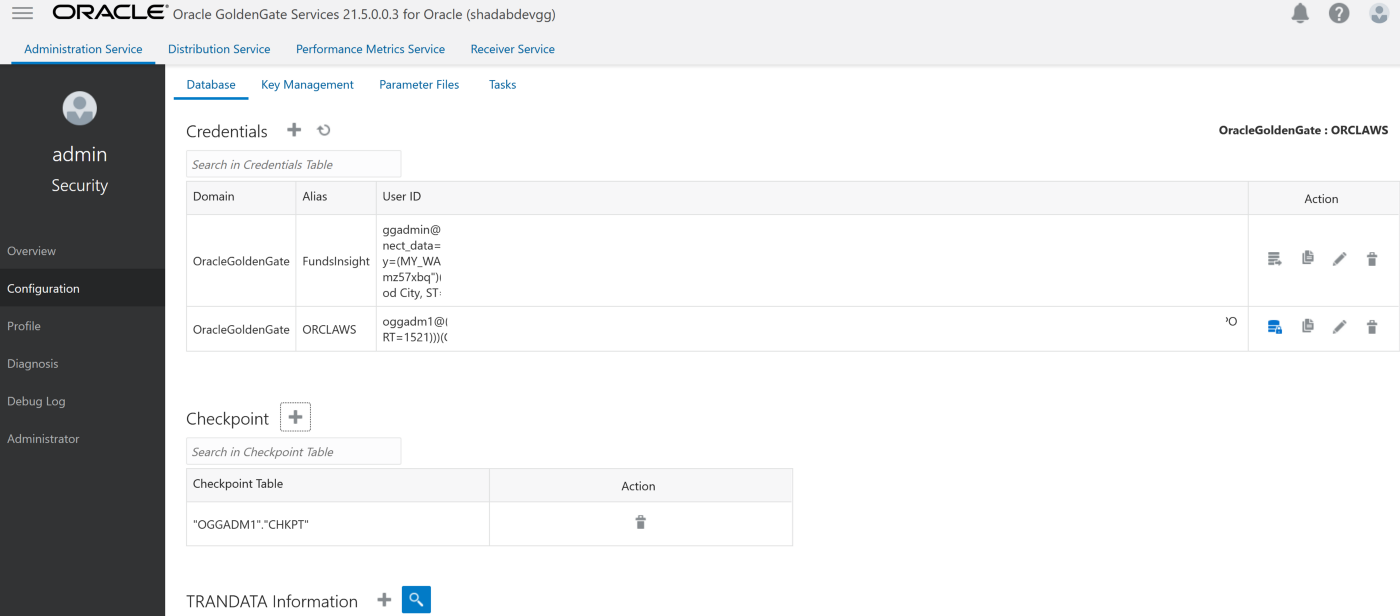

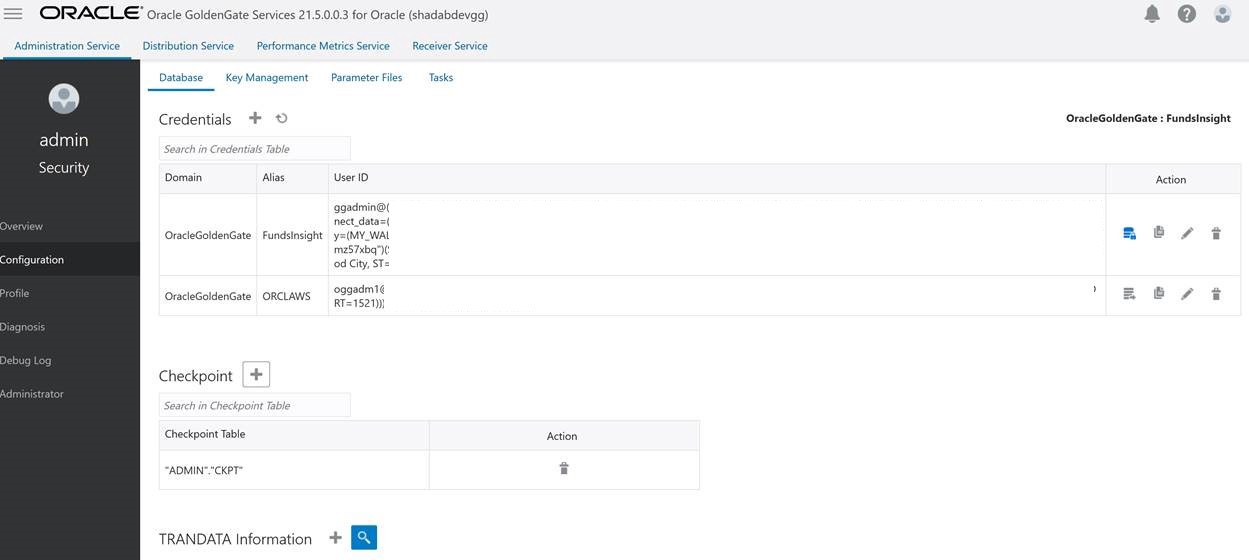

Go to Main Page > Configuration > Login to AWS RDS instance

a. Create Checkpoint table oggadm1.ckpt

b. Add Tran Data for Schema oggadm1

EXTRACT AWSEXT

USERIDALIAS ORCLAWS DOMAIN OracleGoldenGate

EXTTRAIL AW

TABLE OGGADM1.*;
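Once the Extract has been created and started, an integrated Extract registers a logmining capture process in the source database, so its presence can be checked from the RDS side as well. This is a sketch that assumes the 'admin' user can read DBA_CAPTURE; the capture name is generated by GoldenGate and normally contains the Extract name:

```sql
-- Run on the RDS source. A row whose CAPTURE_NAME contains AWSEXT
-- with STATUS = ENABLED suggests the Extract is registered and running.
SELECT capture_name, status, start_scn
FROM   dba_capture;
```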

2. Create a Non-integrated Replicat for ADB on trail file ‘aw’ by clicking on the plus symbol next to the Replicats section

Go to Main Page > Configuration > Login to ATP instance

a. Create Checkpoint table admin.ckpt

b. Add Tran Data for Schema admin

c. Add heartbeat table

REPLICAT adbrep

USERIDALIAS FundsInsight DOMAIN OracleGoldenGate

MAP OGGADM1.TEST, TARGET ADMIN.TEST;

The status of both the Extract and the Replicat should be green on the OCI GoldenGate Administration Dashboard.

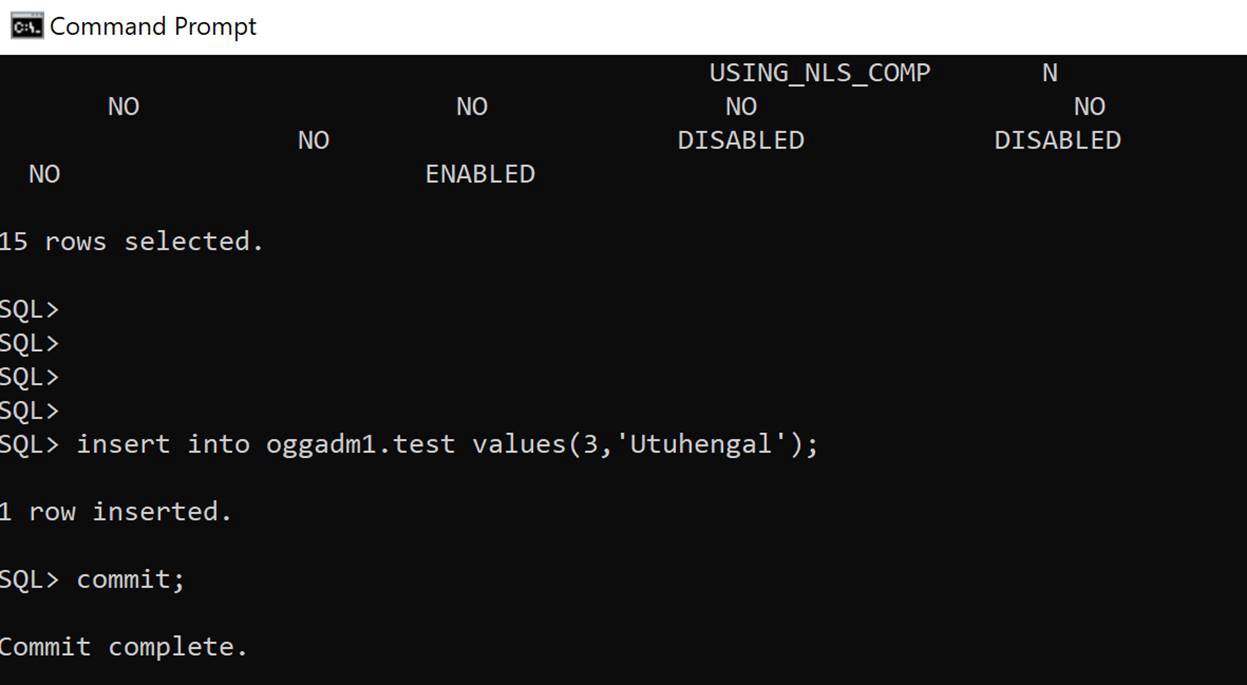

3. Insert transaction at RDS source

insert into oggadm1.test values(3,'Utuhengal');commit;

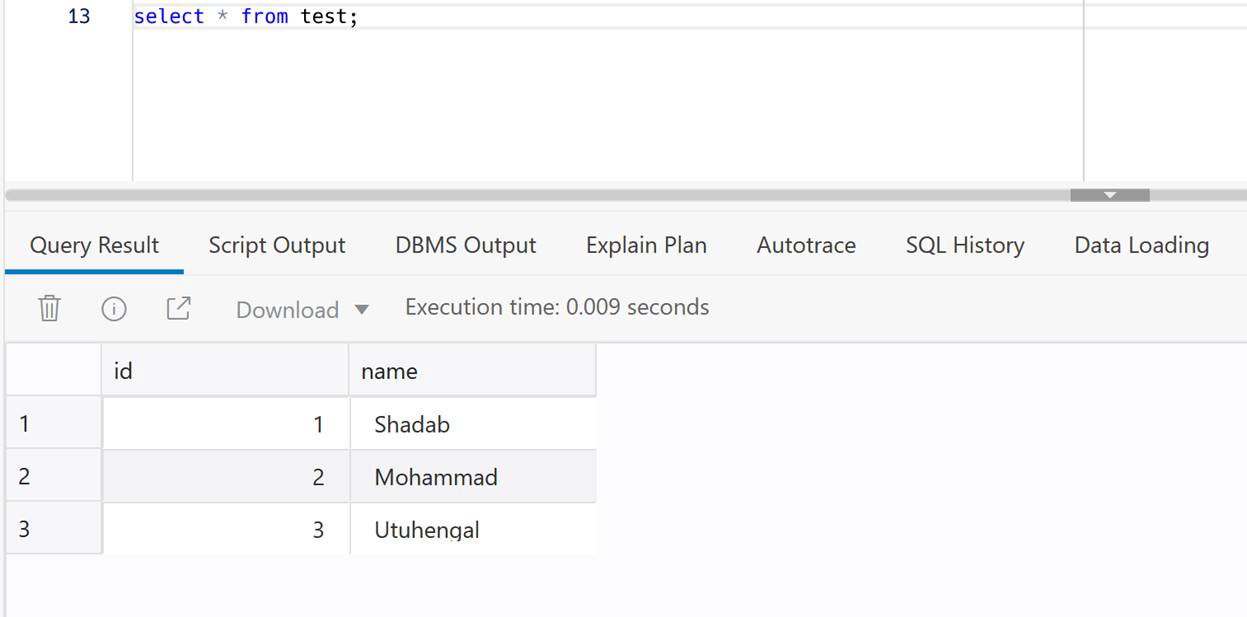

4. Check at ADB Target

select * from test;
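For a slightly more systematic comparison than eyeballing the rows, the same summary query can be run on both sides. A minimal sketch, using the schema and table names from this walkthrough:

```sql
-- Run on the RDS source (as admin or oggadm1).
SELECT COUNT(*) AS row_count, MAX(id) AS max_id
FROM   oggadm1.test;

-- Run on the ADB target (as admin).
SELECT COUNT(*) AS row_count, MAX(id) AS max_id
FROM   admin.test;
```

If replication is healthy, both queries should return the same values shortly after the insert at the source. A persistent difference points to a stopped or lagging Extract or Replicat.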

Conclusion:

We have created cross-cloud replication from an Oracle Database sitting inside AWS to an Oracle Autonomous Database running on OCI. The idea was to demonstrate the capability and ease of use of GoldenGate Microservices running as a replication hub on OCI, letting you create real-time change data capture across two different public clouds. Every component used in this architecture is a fully managed cloud service with no access to the underlying host, so there are no servers to manage and no agents to install on either the source or the target.

References:

- Setup of Goldengate for RDS : https://jinyuwang.weebly.com/cloud-service/how-to-capture-data-from-oracle-database-on-aws-rds-with-oracle-goldengate

- Goldengate Setup for RDS Source : https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/Appendix.OracleGoldenGate.html#Appendix.OracleGoldenGate.rds-source-ec2-hub

- RDS Common Tasks : https://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/Appendix.Oracle.CommonDBATasks.Log.html

- OCI Goldengate Database Registration : https://docs.oracle.com/en/cloud/paas/goldengate-service/using/database-registrations.html#GUID-899B90FF-DF9A-481D-A531-BB9D25005EB9

- Apex Livelab for OCI Goldengate Microservices 21c : https://apexapps.oracle.com/pls/apex/dbpm/r/livelabs/workshop-attendee-2?p210_workshop_id=797&p210_type=3&session=113817274271778

- OCI Goldengate Blog : https://blogs.oracle.com/dataintegration/post/new-oci-goldengate-service-is-first-of-any-major-cloud-provider-to-deliver-operational-and-analytic-integration-into-a-single-data-fabric

- Getting Started with Goldengate : https://docs.oracle.com/goldengate/c1230/gg-winux/GGCON/getting-started-oracle-goldengate.htm#GGCON-GUID-61088509-F951-4737-AE06-29DAEAD01C0C