In this tutorial we will create a Python script which will build a data pipeline to load data from Aurora MySQL RDS to an S3 bucket and copy that data to a Redshift cluster.

One of the assumptions is you have basic understanding of AWS, RDS, MySQL, S3, Python and Redshift. Even if you don’t it’s alright I will explain briefly about each of them to the non-cloud DBA’s

AWS- Amazon Web Services. It is the cloud infrastructure platform from Amazon which can be used to build and host anything from a static website to a globally scalable service like Netflix

RDS – Relational Database Service or RDS or short is Amazons managed relational database service for databases like it’s own Aurora, MySQL, Postgres, Oracle and SQL Server

S3- Simple Storage Service is AWS’s distributed storage which can scale almost infinitely. Data in S3 is stored in Buckets. Think of buckets as Directories but DNS name compliant and cloud hosted

Python – A programming language which is now the defacto standard for data science and engineering

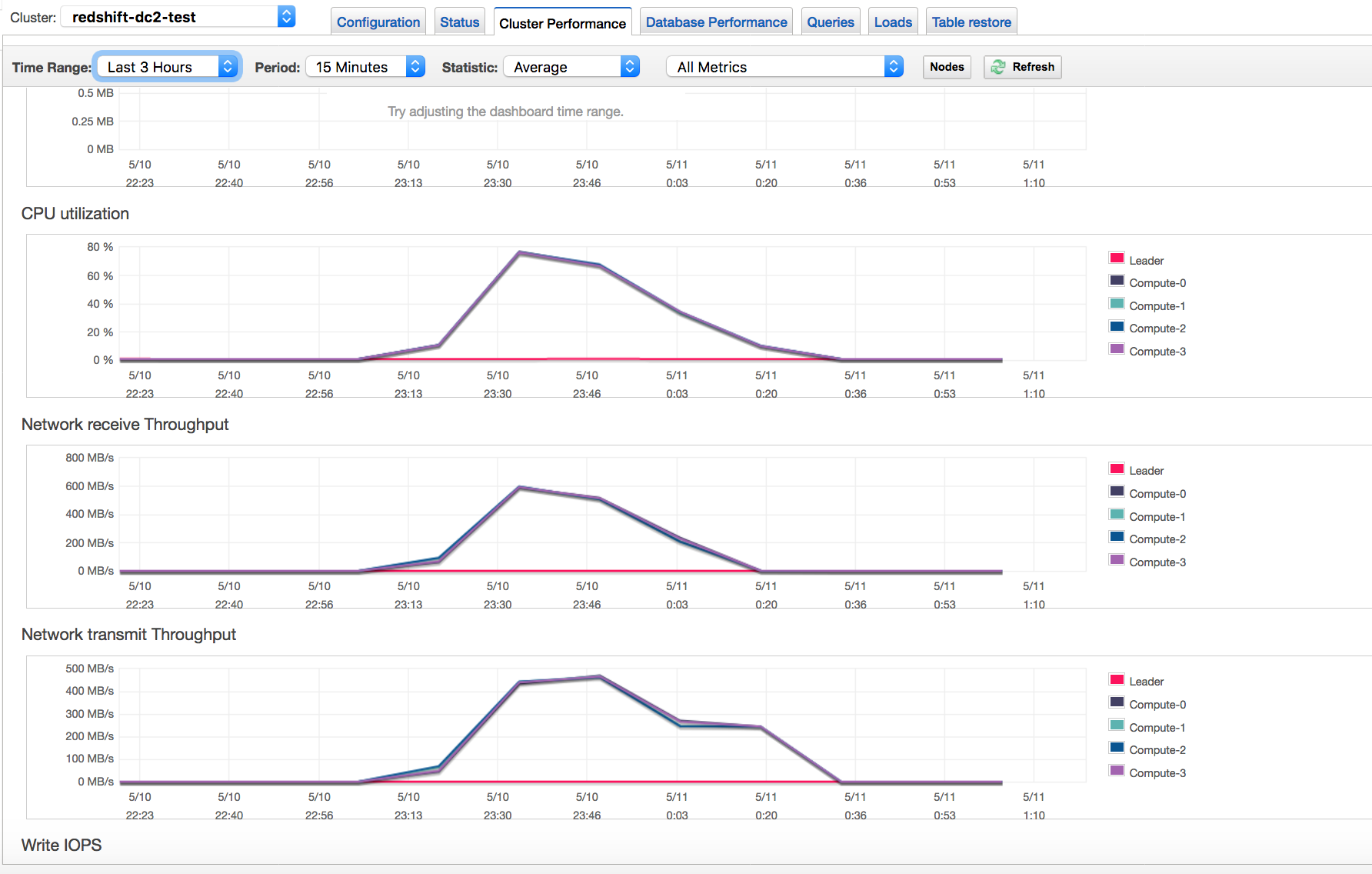

Redshift- AWS’s Petabyte scale Data warehouse which is binary compatible to PostgreSQL but uses a columnar storage engine

The source in this tutorial is a RDS Aurora MySQL database and target is a Redshift cluster. The data is staged in an S3 bucket. With Aurora MySQL you can unload data directly to a S3 bucket but in my script I will offload the table to a local filesystem and then copy it to the S3 bucket. This will give you flexibility in-case you are not using Aurora but a standard MySQL or Maria DB

Environment:

- Python 3.7.2 with pip

- Ec2 instance with the Python 3.7 installed along with all the Python packages

- Source DB- RDS Aurora MySQL 5.6 compatible

- Destination DB – Redshift Cluster

- Database : Dev , Table : employee in both databases which will be used for the data transfer

- S3 bucket for staging the data

- AWS Python SDK Boto3

Make sure both the RDS Aurora MySQL and Redshift cluster has security groups which have have IP of the Ec2 instance for inbound connections (Host and Port)

- Create the table ’employee’ in both the Aurora and Redshift Clusters

Aurora MySQL 5.6

CREATE TABLE `employee` (

`id` int(11) NOT NULL,

`first_name` varchar(45) DEFAULT NULL,

`last_name` varchar(45) DEFAULT NULL,

`phone_number` varchar(45) DEFAULT NULL,

`address` varchar(200) DEFAULT NULL,

PRIMARY KEY (`id`)

) ENGINE=InnoDB DEFAULT CHARSET=latin1;

Redshift

DROP TABLE IF EXISTS employee CASCADE;

CREATE TABLE employee

(

id bigint NOT NULL,

first_name varchar(45),

last_name varchar(45),

phone_number bigint,

address varchar(200)

);

ALTER TABLE employee

ADD CONSTRAINT employee_pkey

PRIMARY KEY (id);

COMMIT;

2. Install Python 3.7.2 and install all the packages needed by the script

sudo /usr/local/bin/python3.7 -m pip install boto3

sudo /usr/local/bin/python3.7 -m pip install psycopg2-binary

sudo /usr/local/bin/python3.7 -m pip install pymysql

sudo /usr/local/bin/python3.7 -m pip install json

sudo /usr/local/bin/python3.7 -m pip install pymongo

3. Insert sample data into the source RDS Aurora DB

$ mysql -u awsuser -h shadmha-cls-aurora.ap-southeast-2.rds.amazonaws.com -p dev

INSERT INTO `employee` VALUES (1,'shadab','mohammad','04447910733','Randwick'),(2,'kris','joy','07761288888','Liverpool'),(3,'trish','harris','07766166166','Freshwater'),(4,'john','doe','08282828282','Newtown'),(5,'mary','jane','02535533737','St. Leonards'),(6,'sam','rockwell','06625255252','Manchester');

SELECT * FROM employee;

4. Download and Configure AWS command line interface

The AWS Python SDK boto3 requires AWS CLI for the credentials to connect to your AWS account. Also for uploading the file to S3 we need boto3 functions. Install AWS CLI on Linux and configure it.

$ aws configure

AWS Access Key ID [****************YGDA]:

AWS Secret Access Key [****************hgma]:

Default region name [ap-southeast-2]:

Default output format [json]:

5. Python Script to execute the Data Pipeline (datapipeline.py)

import boto3

import psycopg2

import pymysql

import csv

import time

import sys

import os

import datetime

from datetime import date

datetime_object = datetime.datetime.now()

print ("###### Data Pipeline from Aurora MySQL to S3 to Redshift ######")

print ("")

print ("Start TimeStamp")

print ("---------------")

print(datetime_object)

print ("")

# Connect to MySQL Aurora and Download Table as CSV File

db_opts = {

'user': 'awsuser',

'password': '******',

'host': 'shadmha-cls-aurora.ap-southeast-2.rds.amazonaws.com',

'database': 'dev'

}

db = pymysql.connect(**db_opts)

cur = db.cursor()

sql = 'SELECT * from employee'

csv_file_path = '/home/centos/my_csv_file.csv'

try:

cur.execute(sql)

rows = cur.fetchall()

finally:

db.close()

# Continue only if there are rows returned.

if rows:

# New empty list called 'result'. This will be written to a file.

result = list()

# The row name is the first entry for each entity in the description tuple.

column_names = list()

for i in cur.description:

column_names.append(i[0])

result.append(column_names)

for row in rows:

result.append(row)

# Write result to file.

with open(csv_file_path, 'w', newline='') as csvfile:

csvwriter = csv.writer(csvfile, delimiter='|', quotechar='"', quoting=csv.QUOTE_MINIMAL)

for row in result:

csvwriter.writerow(row)

else:

sys.exit("No rows found for query: {}".format(sql))

# Upload Generated CSV File to S3 Bucket

s3 = boto3.resource('s3')

bucket = s3.Bucket('mybucket-shadmha')

s3.Object('mybucket-shadmha', 'my_csv_file.csv').put(Body=open('/home/centos/my_csv_file.csv', 'rb'))

#Obtaining the connection to RedShift

con=psycopg2.connect(dbname= 'dev', host='redshift-cluster-1.ap-southeast-2.redshift.amazonaws.com',

port= '5439', user= 'awsuser', password= '*********')

#Copy Command as Variable

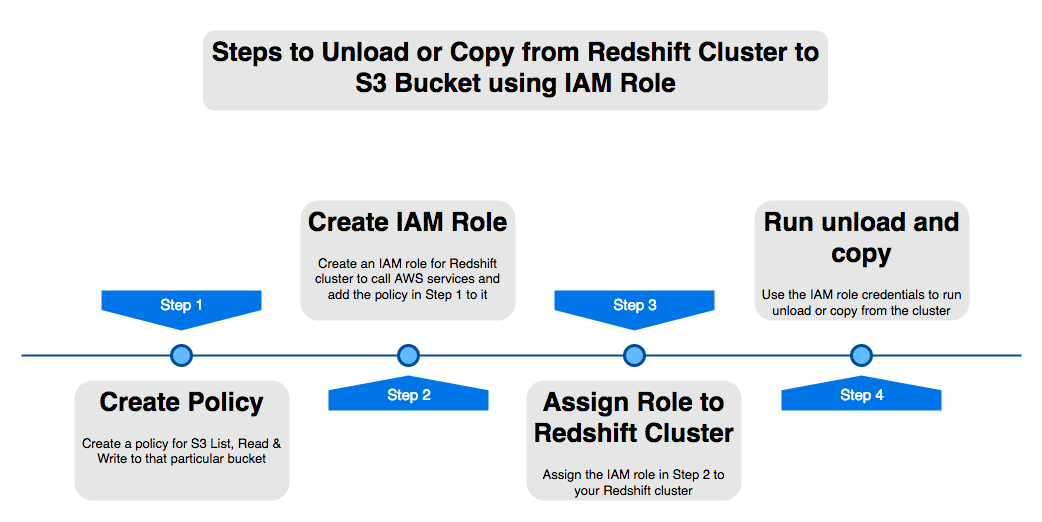

copy_command="copy employee from 's3://mybucket-shadmha/my_csv_file.csv' credentials 'aws_iam_role=arn:aws:iam::775888:role/REDSHIFT' delimiter '|' region 'ap-southeast-2' ignoreheader 1 removequotes ;"

#Opening a cursor and run copy query

cur = con.cursor()

cur.execute("truncate table employee;")

cur.execute(copy_command)

con.commit()

#Close the cursor and the connection

cur.close()

con.close()

# Remove the S3 bucket file and also the local file

DelLocalFile = 'aws s3 rm s3://mybucket-shadmha/my_csv_file.csv --quiet'

DelS3File = 'rm /home/centos/my_csv_file.csv'

os.system(DelLocalFile)

os.system(DelS3File)

datetime_object_2 = datetime.datetime.now()

print ("End TimeStamp")

print ("-------------")

print (datetime_object_2)

print ("")

6. Run the Script or Schedule in Crontab as a Job

$ python3.7 datapipeline.py

Crontab to execute Job daily at 10:30 am

30 10 * * * /usr/local/bin/python3.7 /home/centos/datapipeline.py &>> /tmp/datapipeline.log

7. Check the table in destination Redshift Cluster and all the records should be visible their

This tutorial was done using a small table and very minimum data. But with S3’s distributed nature and massive scale and Redshift as a Data warehouse you can build data pipelines for very large datasets. Redhsift being an OLAP database and Aurora OLTP, many real-life scenarios requires offloading data from your OLTP apps to data warehouses or data marts to perform Analytics on it.

AWS also has an excellent managed solution called Data Pipelines which can automate the movement and transform of Data. But many a times for developing customized solutions Python is the best tool for the job.

Enjoy this script and please let me know in your comments or on Twitter (@easyoradba) if you have any issues or what else would you like me to post for data engineering.