Github link for MCP Server : https://github.com/shadabshaukat/postgres-mcp-server/

Helping Enterprise DBAs Transition to the Cloud


Article first appeared here

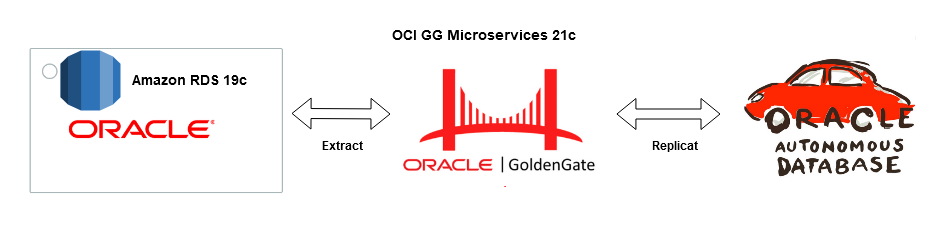

Goldengate Microservices 21c is the latest version of the microservices architecture, which makes creating a data mesh or data fabric across different public clouds as easy as a few clicks. Goldengate is available on OCI as a fully managed service with auto-scaling. It does not require installation of Goldengate software on either the source or the target database instance. Goldengate replicates data with a capture-and-apply mechanism that uses trail files. Both the Extract (capture) and Replicat (apply) processes run on the Goldengate replication instance, which acts as a hub.

Let us go ahead and create a data pipeline that replicates data in real time, using Oracle Cloud Infrastructure (OCI) Goldengate 21c, from an Amazon RDS Oracle instance to an Oracle Autonomous Database in OCI. These are the components of this solution:

Source : Amazon RDS Oracle 19c EE

Target : OCI Autonomous Transaction Processing 19c

Replication Hub : OCI Goldengate 21c Microservices

Network : Site-to-Site IPsec VPN or FastConnect (with Direct Connect on AWS)

The solution is broadly divided into four phases :

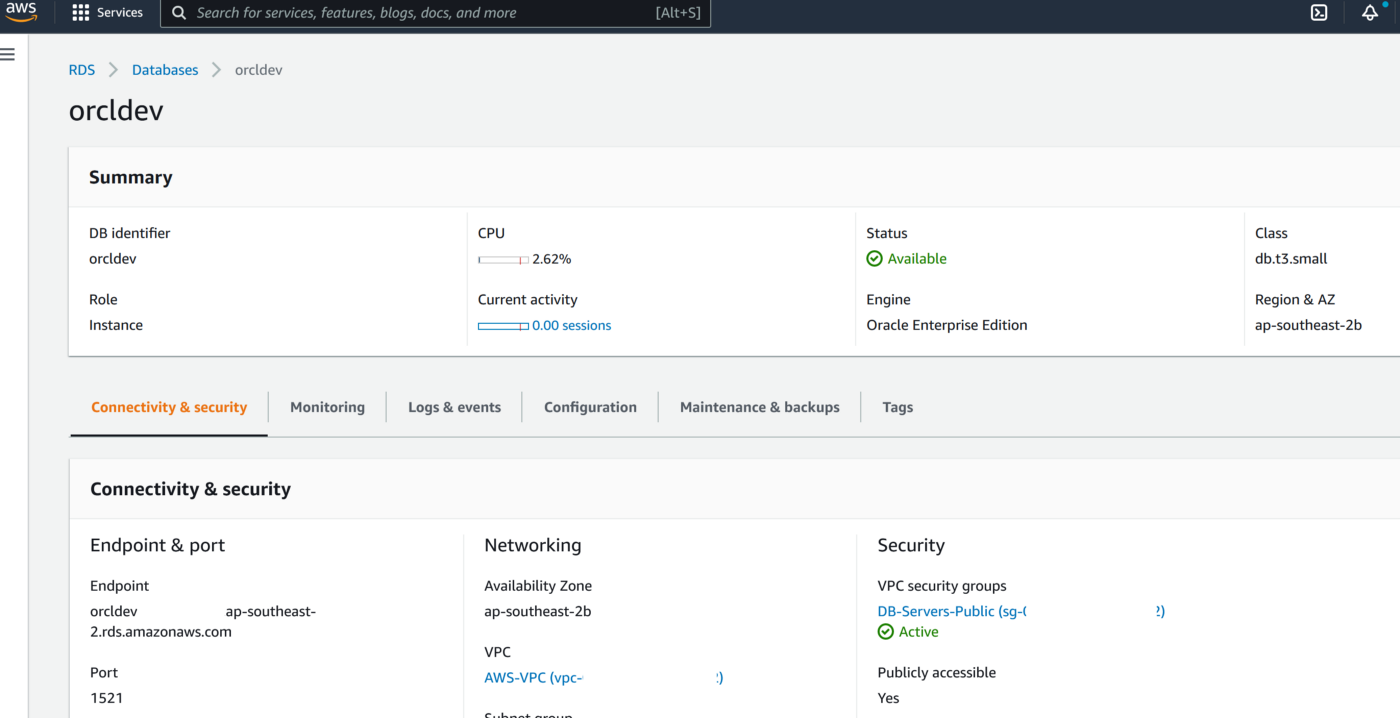

Phase 1 — AWS Setup : RDS Source and Enable Goldengate Capture

The first part of the setup requires us to provision a VPC, a DB subnet group and an Oracle 19c RDS instance on AWS. Please ensure all the requisite network constructs, such as security groups, are in place for connectivity from OCI Goldengate to RDS. In a production scenario it would be better to keep the RDS instance without a public endpoint and set up FastConnect from AWS to OCI.

2. Create an RDS Oracle 19c EE instance with the master user 'admin'

3. Create a new DB parameter group for Oracle 19c EE with the parameter ENABLE_GOLDENGATE_REPLICATION set to TRUE

4. Change the parameter group of the RDS instance and reboot the RDS Oracle instance once the parameter group has been applied. Double-check that ENABLE_GOLDENGATE_REPLICATION is set to TRUE and that the correct parameter group is applied to the RDS instance.
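If you prefer to script steps 3 and 4 rather than click through the console, the boto3 sketch below is one way to do it. The parameter group name ogg-oracle-ee-19 and the instance identifier orcl are hypothetical placeholders, and the family name oracle-ee-19 is assumed to be the RDS family for Oracle 19c EE; check all three against your account before running it.

```python
"""Sketch: steps 3 and 4 with boto3 (names below are hypothetical placeholders)."""

GROUP_NAME = "ogg-oracle-ee-19"  # hypothetical parameter group name
GROUP_FAMILY = "oracle-ee-19"    # assumed RDS family for Oracle 19c EE
INSTANCE_ID = "orcl"             # hypothetical RDS instance identifier


def goldengate_parameters():
    """Parameter list for modify_db_parameter_group.

    Applied with pending-reboot to match step 4, which reboots the
    instance after the new group is attached.
    """
    return [{
        "ParameterName": "enable_goldengate_replication",
        "ParameterValue": "true",
        "ApplyMethod": "pending-reboot",
    }]


def apply_parameter_group(rds):
    """Create the group, set the parameter, attach it and reboot the instance."""
    rds.create_db_parameter_group(
        DBParameterGroupName=GROUP_NAME,
        DBParameterGroupFamily=GROUP_FAMILY,
        Description="Enable GoldenGate replication",
    )
    rds.modify_db_parameter_group(
        DBParameterGroupName=GROUP_NAME,
        Parameters=goldengate_parameters(),
    )
    rds.modify_db_instance(
        DBInstanceIdentifier=INSTANCE_ID,
        DBParameterGroupName=GROUP_NAME,
        ApplyImmediately=True,
    )
    # Wait until the instance is available again; depending on timing the
    # modification may still be in progress, in which case retry the reboot.
    rds.get_waiter("db_instance_available").wait(DBInstanceIdentifier=INSTANCE_ID)
    rds.reboot_db_instance(DBInstanceIdentifier=INSTANCE_ID)


if __name__ == "__main__":
    import boto3

    apply_parameter_group(boto3.client("rds"))
```

The double-check in step 4 still applies after the reboot.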

5. Set the log retention period on the source DB as the 'admin' user

exec rdsadmin.rdsadmin_util.set_configuration('archivelog retention hours',24);commit;

6. Create a new user account on the RDS instance for Goldengate to use, as the 'admin' user

CREATE TABLESPACE administrator;

CREATE USER oggadm1 IDENTIFIED BY "*********" DEFAULT TABLESPACE ADMINISTRATOR TEMPORARY TABLESPACE TEMP;

commit;

7. Grant account privileges on the source RDS instance as the 'admin' user

GRANT CREATE SESSION, ALTER SESSION TO oggadm1;

GRANT RESOURCE TO oggadm1;

GRANT SELECT ANY DICTIONARY TO oggadm1;

GRANT FLASHBACK ANY TABLE TO oggadm1;

GRANT SELECT ANY TABLE TO oggadm1;

GRANT SELECT_CATALOG_ROLE TO admin WITH ADMIN OPTION;

exec rdsadmin.rdsadmin_util.grant_sys_object ('DBA_CLUSTERS', 'OGGADM1');

exec rdsadmin.rdsadmin_util.grant_sys_object ('DBA_CLUSTERS', 'ADMIN');

GRANT EXECUTE ON DBMS_FLASHBACK TO oggadm1;

GRANT SELECT ON SYS.V_$DATABASE TO oggadm1;

GRANT ALTER ANY TABLE TO oggadm1;

grant unlimited tablespace TO oggadm1;

grant EXECUTE_CATALOG_ROLE to admin WITH ADMIN OPTION;

commit;

8. Finally, grant the privileges needed by a user account to be a GoldenGate administrator. The package that you use to perform the grant, dbms_goldengate_auth or rdsadmin_dbms_goldengate_auth, depends on the Oracle DB engine version.

-- As the admin user on the RDS Oracle instance, for Oracle Database versions lower than 12.2 --

exec dbms_goldengate_auth.grant_admin_privilege (grantee=>'OGGADM1',privilege_type=>'capture',grant_select_privileges=>true, do_grants=>TRUE);

exec dbms_goldengate_auth.grant_admin_privilege('OGGADM1',container=>'all');

exec dbms_goldengate_auth.grant_admin_privilege('OGGADM1');

commit;

-- For Oracle Database 12c Release 2 (12.2) and later, which requires patch level 12.2.0.1.ru-2019-04.rur-2019-04.r1 or later, run the following PL/SQL program --

exec rdsadmin.rdsadmin_dbms_goldengate_auth.grant_admin_privilege (grantee=>'OGGADM1', privilege_type=>'capture',grant_select_privileges=>true, do_grants=>TRUE);

commit;

To revoke privileges, use the procedure revoke_admin_privilege in the same package.
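Because the package depends on the engine version, a small helper can pick it for you. This is a hypothetical Python helper that only builds the EXEC line for the capture grant shown above. It compares the major and minor version and does not check the 12.2 patch-level requirement, so verify that yourself.

```python
"""Hypothetical helper: choose the GoldenGate auth package by engine version."""


def goldengate_auth_call(engine_version, grantee="OGGADM1"):
    """Return the EXEC line for the capture grant.

    engine_version is the RDS engine version string, for example
    "19.0.0.0.ru-2021-10.rur-2021-10.r1" or "12.1.0.2.v20".
    """
    major, minor = (int(part) for part in engine_version.split(".")[:2])
    if (major, minor) >= (12, 2):
        # 12.2 and later: RDS wrapper package (patch level not checked here)
        package = "rdsadmin.rdsadmin_dbms_goldengate_auth"
    else:
        package = "dbms_goldengate_auth"
    return (
        f"exec {package}.grant_admin_privilege(grantee=>'{grantee}', "
        "privilege_type=>'capture', grant_select_privileges=>true, do_grants=>TRUE);"
    )


if __name__ == "__main__":
    print(goldengate_auth_call("19.0.0.0.ru-2021-10.rur-2021-10.r1"))
```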

9. TNS entry for AWS RDS Instance

OGGTARGET=(DESCRIPTION=(ENABLE=BROKEN)(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=orcl.*****.ap-southeast-2.rds.amazonaws.com)(PORT=1521)))(CONNECT_DATA=(SID=ORCL)))

Connect string to be added to the Registered Database in OCI:

(DESCRIPTION=(ENABLE=BROKEN)(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=orcl.****.ap-southeast-2.rds.amazonaws.com)(PORT=1521)))(CONNECT_DATA=(SID=ORCL)))

Alias (to be used later in OCI GG configuration) : ORCLAWS
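When you register more RDS instances, it can help to generate the descriptor rather than hand-edit the parentheses. The hypothetical helpers below rebuild the same SID-based descriptor as step 9 from a host, port and SID; any host you pass in is your own endpoint.

```python
"""Hypothetical helpers: build the SID-based connect descriptor from step 9."""


def tns_descriptor(host, port=1521, sid="ORCL"):
    """Descriptor in the form pasted into the OCI Registered Database."""
    return (
        "(DESCRIPTION=(ENABLE=BROKEN)"
        f"(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST={host})(PORT={port})))"
        f"(CONNECT_DATA=(SID={sid})))"
    )


def tns_entry(alias, host, port=1521, sid="ORCL"):
    """Prefix the descriptor with an alias, as in a tnsnames.ora entry."""
    return f"{alias}={tns_descriptor(host, port, sid)}"


if __name__ == "__main__":
    print(tns_entry("OGGTARGET", "your-rds-endpoint.ap-southeast-2.rds.amazonaws.com"))
```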

10. Create Test Table in RDS Oracle Instance

CREATE TABLE oggadm1.test (id number,name varchar2(100));

insert into oggadm1.test values (1,'Shadab');

insert into oggadm1.test values (2,'Mohammad');

commit;

11. Enable force logging and supplemental logging as the admin user

-- Enable force logging --

EXEC rdsadmin.rdsadmin_util.force_logging(p_enable => true);

-- Enable supplemental logging --

begin rdsadmin.rdsadmin_util.alter_supplemental_logging(p_action => 'ADD');

end;

/
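Before moving on, you can confirm that both settings took effect. This sketch assumes the python-oracledb driver and a placeholder RDS endpoint; it reads FORCE_LOGGING and SUPPLEMENTAL_LOG_DATA_MIN from V$DATABASE as the admin user.

```python
"""Sketch: confirm force logging and minimal supplemental logging on the source."""

CHECK_SQL = "SELECT force_logging, supplemental_log_data_min FROM v$database"


def logging_ready(force_logging, supp_min):
    """True when force logging is on and minimal supplemental logging is enabled.

    V$DATABASE reports FORCE_LOGGING as YES once enabled; minimal
    supplemental logging shows YES (or IMPLICIT).
    """
    return force_logging == "YES" and supp_min in ("YES", "IMPLICIT")


if __name__ == "__main__":
    import oracledb  # python-oracledb driver

    # Hypothetical placeholders: your RDS endpoint and the admin password
    dsn = oracledb.makedsn("your-rds-endpoint.ap-southeast-2.rds.amazonaws.com", 1521, sid="ORCL")
    with oracledb.connect(user="admin", password="********", dsn=dsn) as conn:
        with conn.cursor() as cur:
            cur.execute(CHECK_SQL)
            force_logging, supp_min = cur.fetchone()
    status = "ready" if logging_ready(force_logging, supp_min) else "NOT ready"
    print(f"Source logging {status}: FORCE_LOGGING={force_logging}, "
          f"SUPPLEMENTAL_LOG_DATA_MIN={supp_min}")
```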

Phase 2 — OCI Setup : Autonomous Database

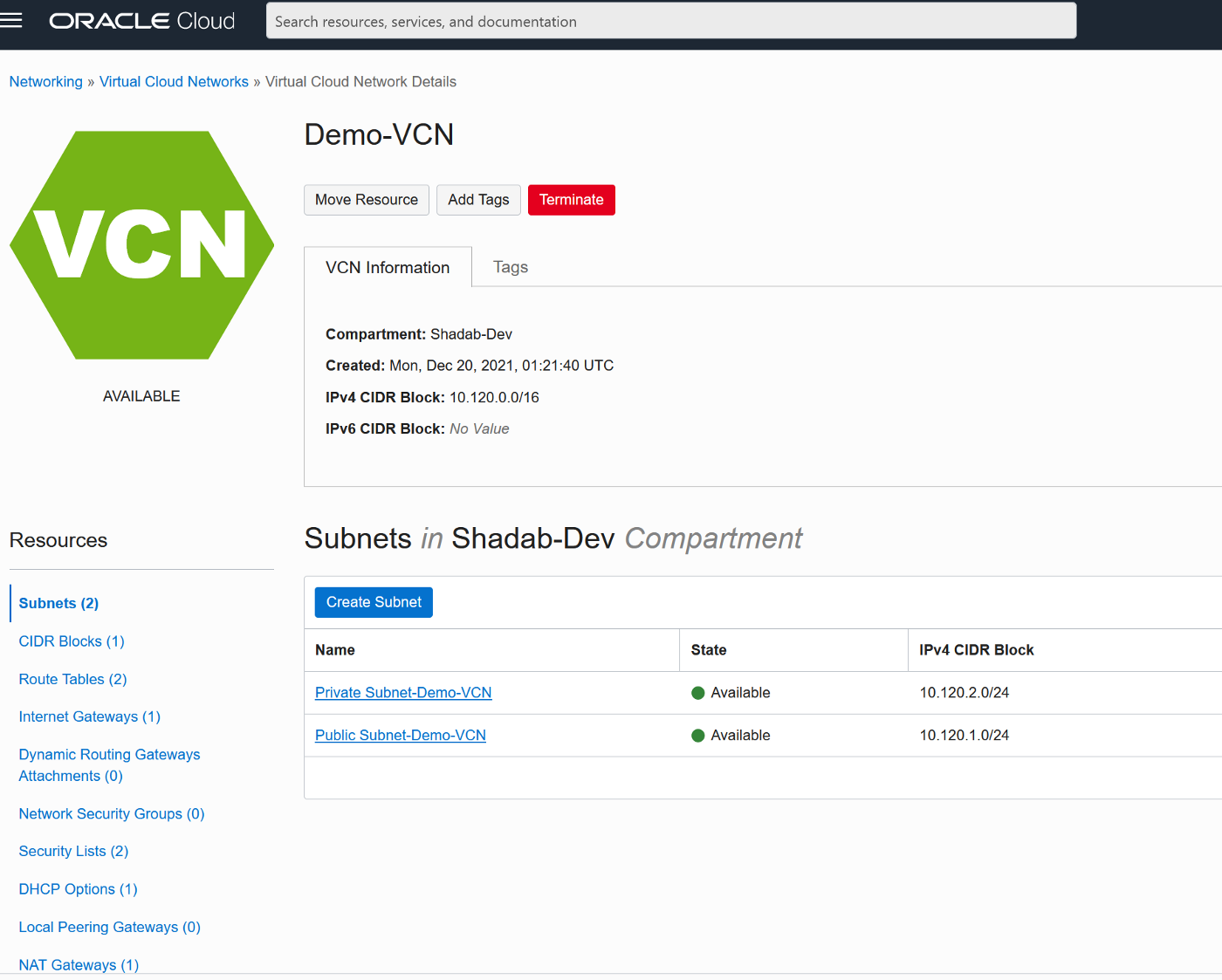

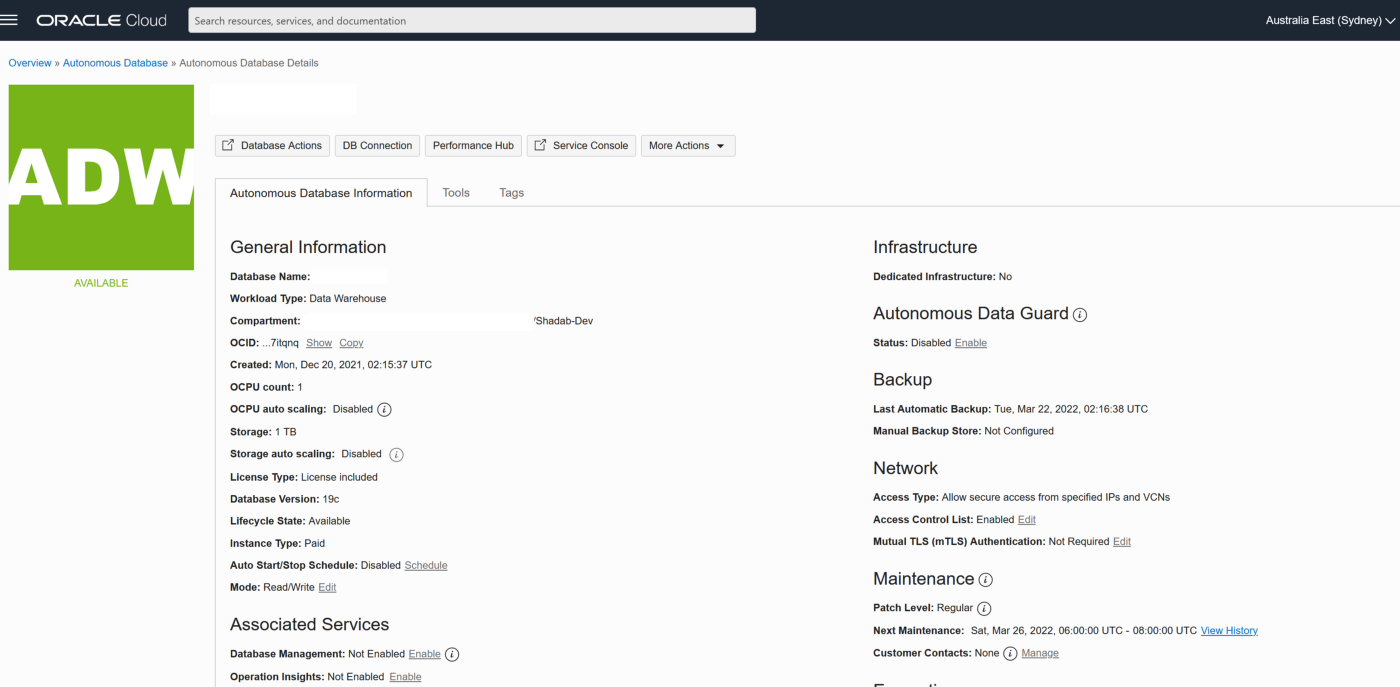

We will provision the VCN and the Autonomous Database on OCI, and enable the Goldengate replication user.

2. Create an Autonomous Transaction Processing database, configure its network access options, and set mTLS authentication to not required

3. Unlock the ggadmin user in ATP

alter user ggadmin identified by ****** account unlock;

4. Create the table 'test' in the admin schema and do an initial load (normally this would be done with Data Pump, which is beyond the scope of this article)

CREATE TABLE test (id number,name varchar2(100));

insert into test values (1,'Shadab');

insert into test values (2,'Mohammad');

commit;

select * from test;

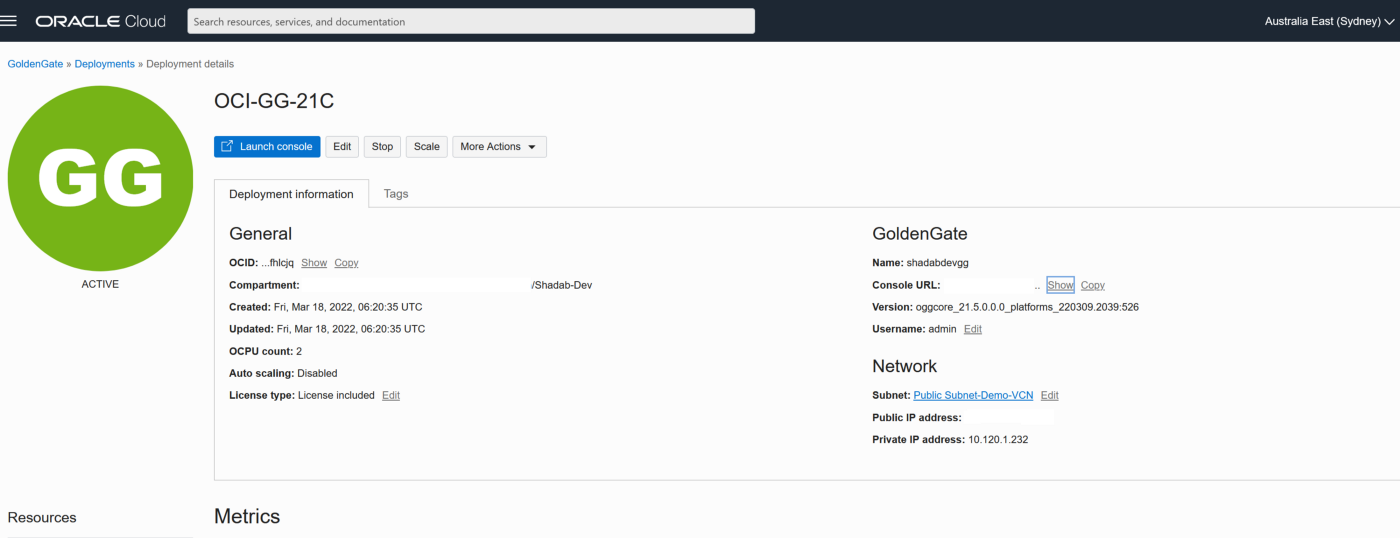

Phase 3 — OCI Setup : Goldengate

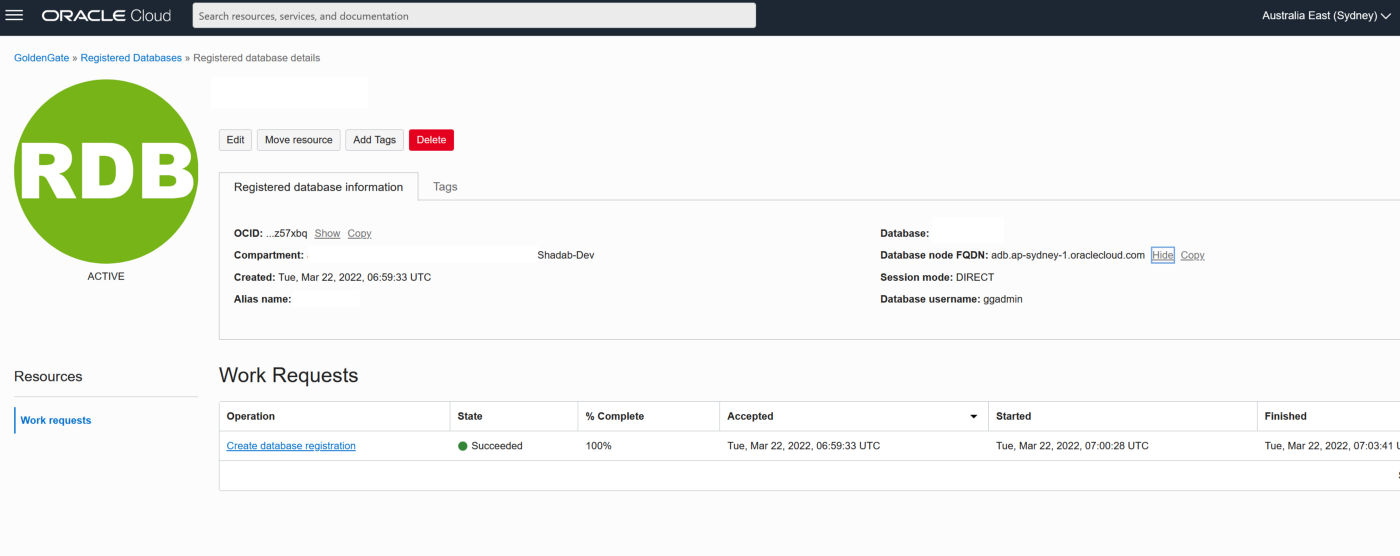

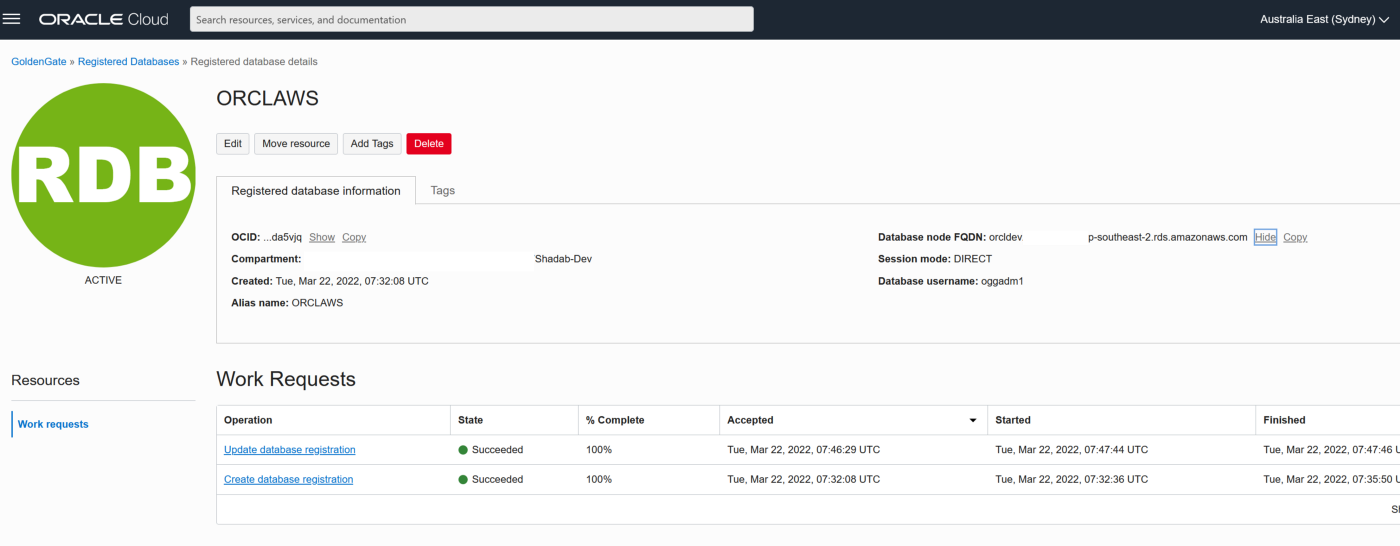

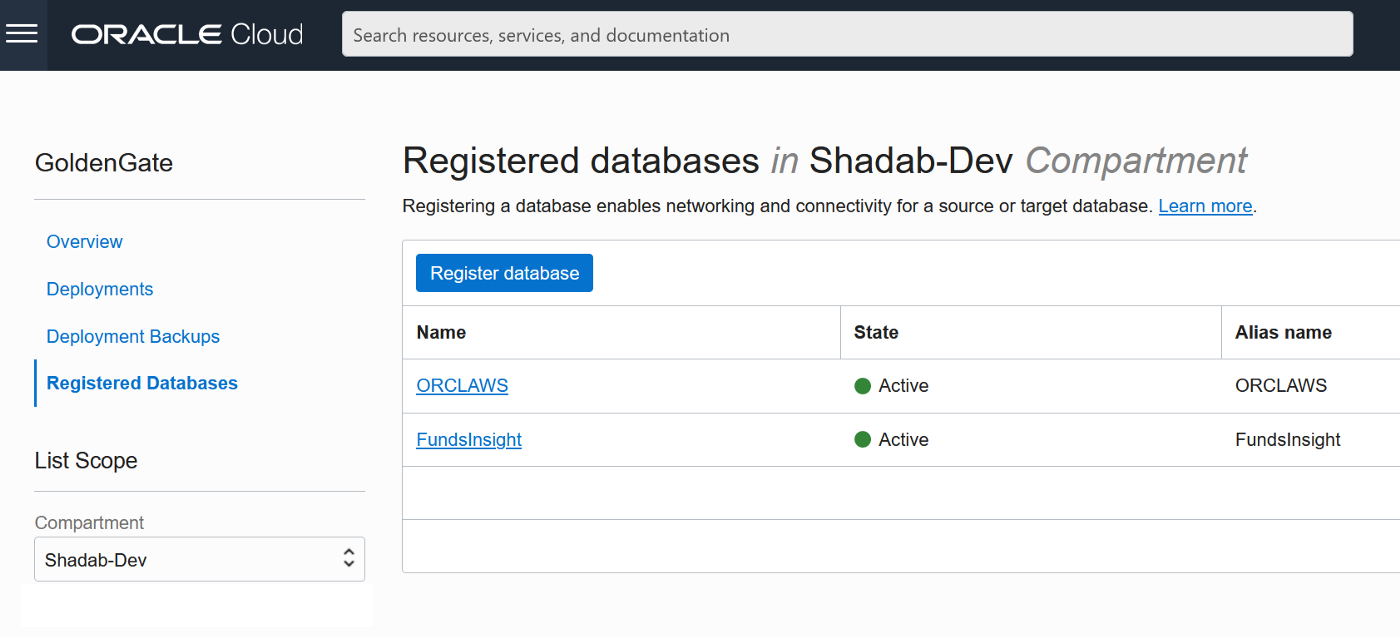

2. Go to Oracle Database > Goldengate > Registered Databases

a. Add the ATP database created above with the ggadmin user

b. Add the RDS instance database using oggadm1 user

3. Test the connectivity to both databases; they should show as Active in the console

4. Go to the launch URL for the Goldengate deployment and log in with the username and password set in step 1.

eg : https://e*******q.deployment.goldengate.ap-sydney-1.oci.oraclecloud.com/

1. Create an Integrated Extract from the Administration Service: click the plus symbol next to the Extracts section

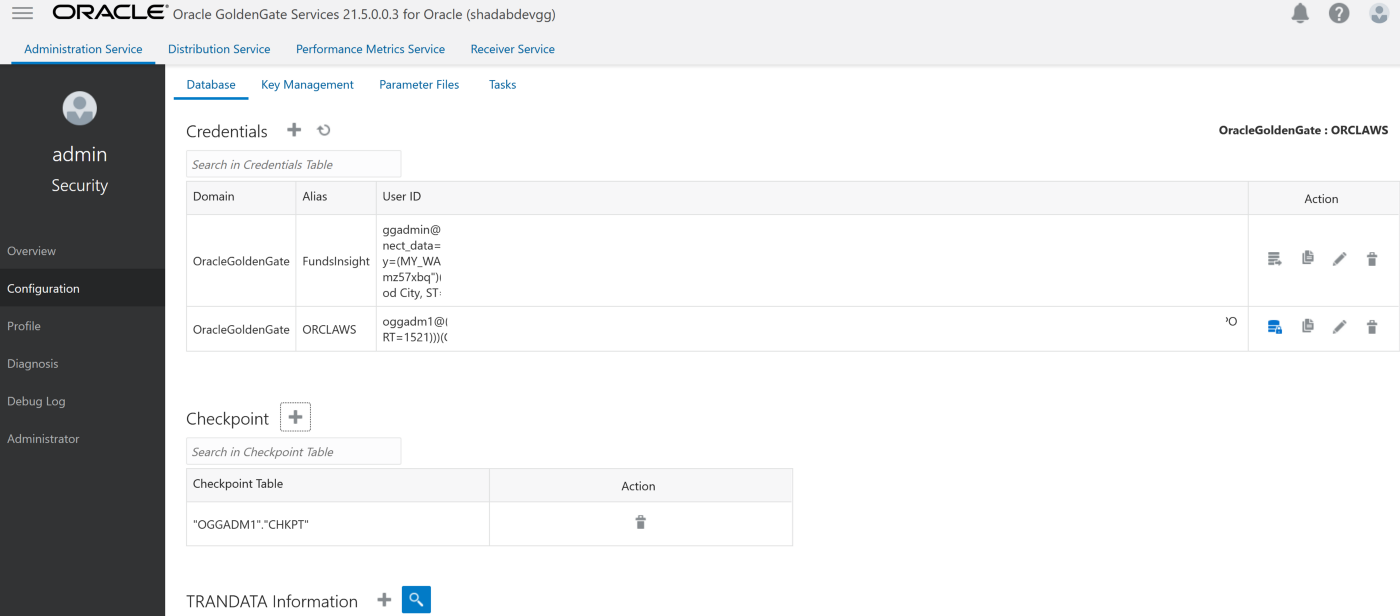

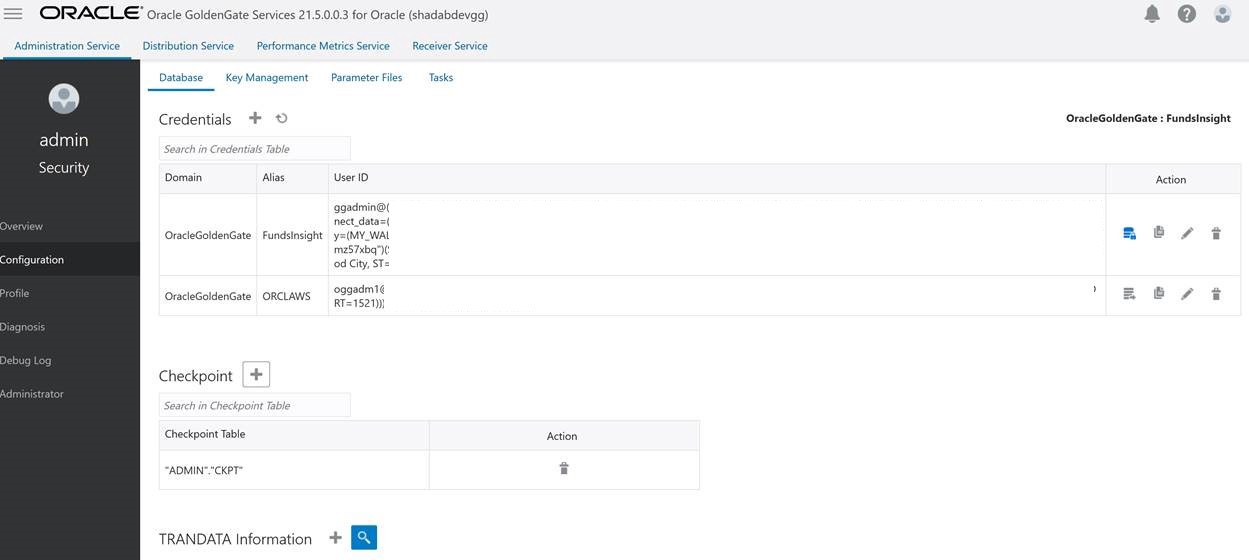

Go to Main Page > Configuration > Login to AWS RDS instance

a. Create Checkpoint table oggadm1.ckpt

b. Add Tran Data for Schema oggadm1

EXTRACT AWSEXT

USERIDALIAS ORCLAWS DOMAIN OracleGoldenGate

EXTTRAIL AW

TABLE OGGADM1.*;

2. Create a non-integrated Replicat for ADB on trail file 'aw': click the plus symbol next to the Replicats section

Go to Main Page > Configuration > Login to ATP instance

a. Create Checkpoint table admin.ckpt

b. Add Tran Data for Schema admin

c. Add heartbeat table

REPLICAT adbrep

USERIDALIAS FundsInsight DOMAIN OracleGoldenGate

MAP OGGADM1.TEST, TARGET ADMIN.TEST;
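If you replicate more than one table, the parameter files grow quickly. These hypothetical Python helpers render the Extract and Replicat parameters in the same shape as above, so you can generate one MAP line per table; the names, aliases and trail used in the usage are the ones from this article.

```python
"""Hypothetical helpers: render the Extract and Replicat parameter files."""


def extract_params(name, alias, trail, schemas):
    """Extract capturing every table of each schema into one trail."""
    lines = [
        f"EXTRACT {name}",
        f"USERIDALIAS {alias} DOMAIN OracleGoldenGate",
        f"EXTTRAIL {trail}",
    ]
    lines += [f"TABLE {schema}.*;" for schema in schemas]
    return "\n".join(lines)


def replicat_params(name, alias, mappings):
    """Replicat with one MAP line per (source_table, target_table) pair."""
    lines = [
        f"REPLICAT {name}",
        f"USERIDALIAS {alias} DOMAIN OracleGoldenGate",
    ]
    lines += [f"MAP {src}, TARGET {tgt};" for src, tgt in mappings]
    return "\n".join(lines)


if __name__ == "__main__":
    print(extract_params("AWSEXT", "ORCLAWS", "AW", ["OGGADM1"]))
    print()
    print(replicat_params("adbrep", "FundsInsight", [("OGGADM1.TEST", "ADMIN.TEST")]))
```

Goldengate also accepts wildcards in MAP (for example MAP OGGADM1.*, TARGET ADMIN.*;), which can replace a per-table list when both schemas hold the same tables.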

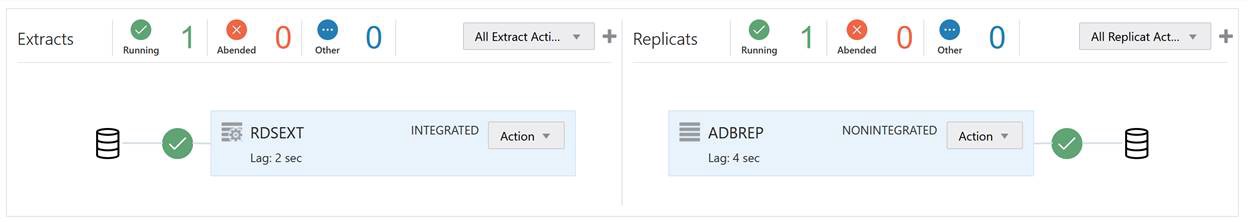

The status should be green on the OCI Goldengate Administration dashboard.

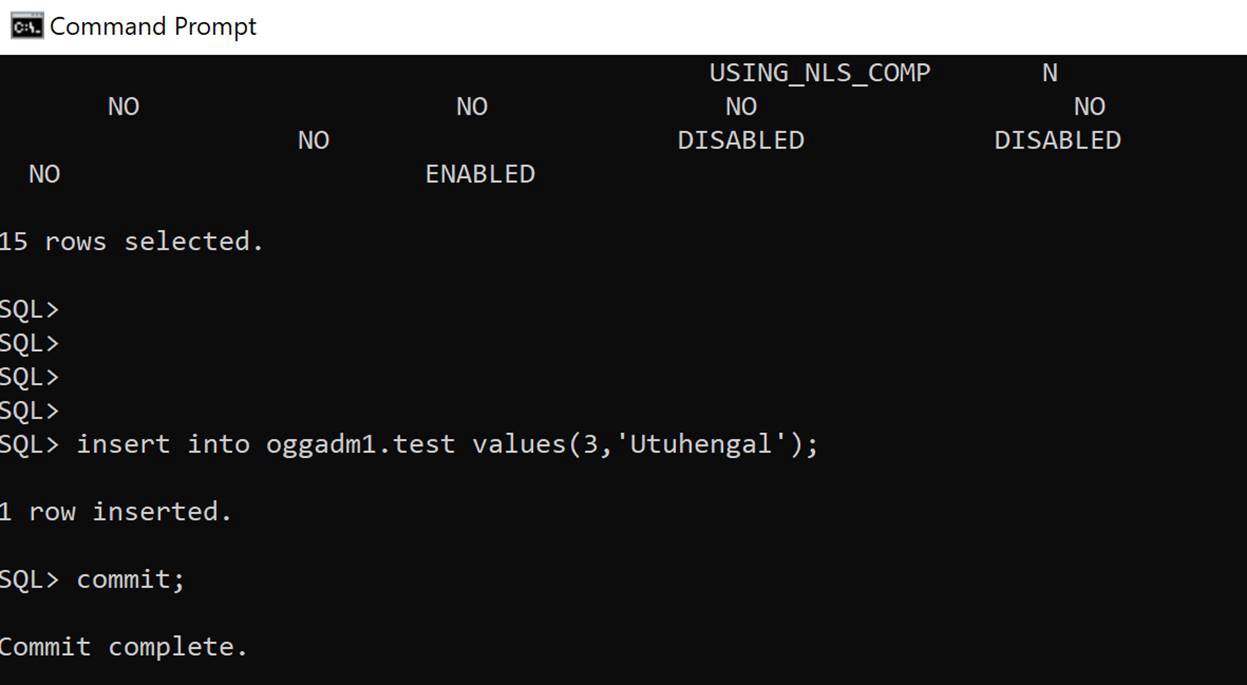

3. Insert transaction at RDS source

insert into oggadm1.test values(3,'Utuhengal');commit;

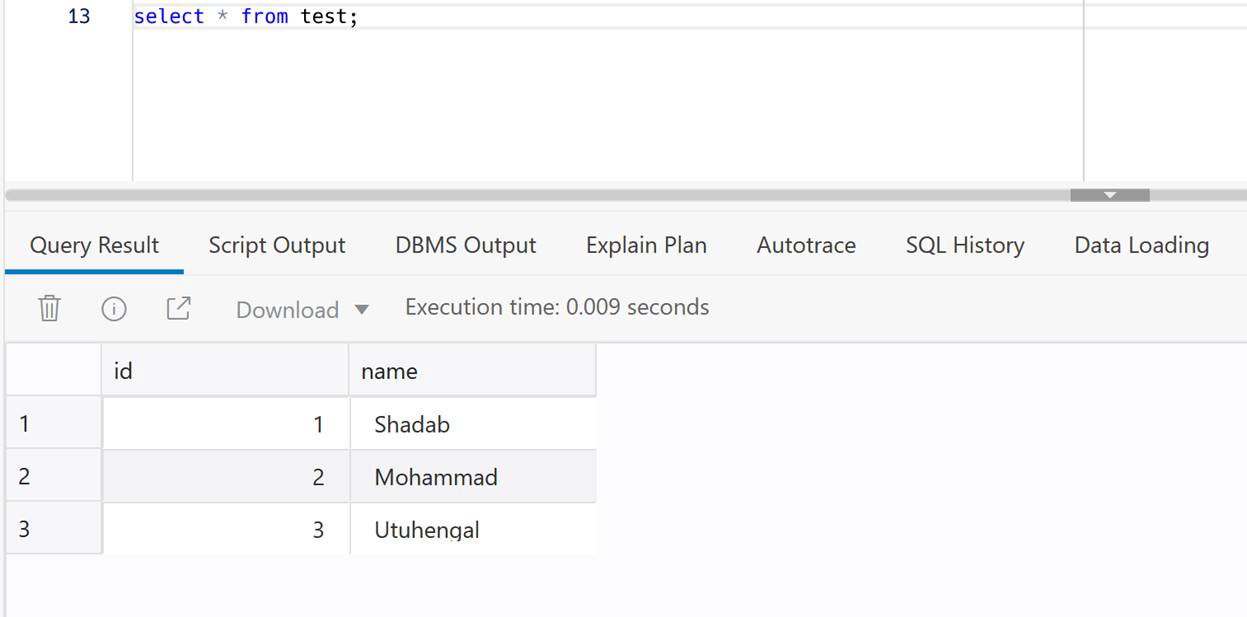

4. Check at ADB Target

select * from test;
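To check the replication without eyeballing both result sets, you can diff the source and target rows. This is a sketch assuming python-oracledb; the two connect strings are placeholders for the RDS descriptor and the TLS connect string shown in the ATP console.

```python
"""Sketch: compare the test table on the RDS source and the ATP target."""


def missing_rows(source_rows, target_rows):
    """Rows present on the source that have not arrived on the target yet."""
    return sorted(set(source_rows) - set(target_rows))


def fetch(conn, sql):
    """Run a query and return all rows as a list of tuples."""
    with conn.cursor() as cur:
        cur.execute(sql)
        return cur.fetchall()


if __name__ == "__main__":
    import oracledb

    # Hypothetical connect strings and masked passwords
    with oracledb.connect(user="oggadm1", password="****", dsn="<rds-connect-string>") as source, \
         oracledb.connect(user="admin", password="****", dsn="<atp-tls-connect-string>") as target:
        src_rows = fetch(source, "SELECT id, name FROM oggadm1.test")
        tgt_rows = fetch(target, "SELECT id, name FROM admin.test")
    print("Not yet replicated:", missing_rows(src_rows, tgt_rows) or "none")
```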

We have created cross-cloud replication from an Oracle Database running in AWS to an Oracle Autonomous Database running on OCI. The idea was to demonstrate how easily Goldengate Microservices can run a replication hub on OCI and let you create real-time change data capture across two different public clouds. Every component used in this architecture is a fully managed cloud service without access to the underlying host, so there are no servers to manage and no agents to install on either the source or the target.

PostgreSQL is one of the most popular open-source databases, and there is a lot of information available on backing up and restoring it. I have used these scripts to back up production databases and restore them to new Postgres servers. So here it goes:

Backup PostgreSQL Database – Backup_Pgsql.sh

#!/bin/bash

hostname=$(hostname)

# Dump DBs

date=$(date +"%Y%m%d_%H%M%N")

backupdir='/home/opc'

dbname='demo'

filename="$backupdir/${hostname}_${dbname}_${date}"

pg_dump -U postgres --encoding utf8 -F c -f "$filename.dump" "$dbname"

Restore PostgreSQL Database – Restore_Pgsql.sh

#!/bin/bash

# Restore DB: the dump file name is passed as the first argument

filename="$1"

pg_restore -U postgres -d demo -c --if-exists "$filename"

exit 0
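If you would rather drive the same backup and restore from Python (for example from an existing scheduler), the sketch below builds the pg_dump and pg_restore argument lists and runs them with subprocess. It is an alternative, not the scripts themselves: the timestamp format %Y%m%d_%H%M%S and the --if-exists flag are choices made for this sketch.

```python
"""Sketch: pg_dump / pg_restore argument lists driven from Python."""
import socket
import subprocess
from datetime import datetime


def backup_command(dbname, backupdir="/home/opc", now=None):
    """Custom-format dump named <host>_<db>_<timestamp>.dump."""
    now = now or datetime.now()
    stamp = now.strftime("%Y%m%d_%H%M%S")
    filename = f"{backupdir}/{socket.gethostname()}_{dbname}_{stamp}.dump"
    return ["pg_dump", "-U", "postgres", "--encoding", "utf8", "-F", "c",
            "-f", filename, dbname]


def restore_command(dumpfile, dbname="demo"):
    """-c drops objects before recreating them; --if-exists skips missing ones."""
    return ["pg_restore", "-U", "postgres", "-d", dbname, "-c", "--if-exists", dumpfile]


if __name__ == "__main__":
    # Argument lists are passed without a shell, so no quoting issues
    subprocess.run(backup_command("demo"), check=True)
```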

Usage for Restore

$ ./Restore_Pgsql.sh pgimportmaster-demo-20211129_1013.dump

This document addresses the specific configuration points that need to be in place to build and store a Hive metastore outside an EMR cluster, in an RDS MySQL database. It also covers the steps to connect a Redshift cluster to an EMR cluster so that Redshift can create and access the tables stored in the external metastore.

Resources Used:

• Redshift Cluster

• RDS MySQL Instance

• EMR Cluster

Note: All resources must be in the same VPC and the same region for this practice.

Creating the RDS MySQL:

1 – First, create an RDS MySQL instance if you don't have one already. Open the AWS RDS console and create a MySQL instance to use during this practice.

Note: Please make a note of the RDS security group, endpoint, master user and master password. We will need this information later on.

2 – Once the RDS MySQL instance is created, modify its security group to add an inbound rule allowing All traffic on all port ranges from the VPC's default security group.

Note: This VPC's default security group will also be used when creating the EMR cluster later on, but it needs to be whitelisted beforehand; otherwise the EMR launch will fail when it tries to reach the RDS MySQL instance.

Before creating the EMR Cluster:

3 – After creating the RDS MySQL instance (and opening its security group to EMR), but before creating the EMR cluster, you need to create a JSON configuration file. EMR ingests this file while the cluster is being created; it tells EMR how to access the remote RDS MySQL database.

4 – Copy the JSON property structure from the following link (use Copy icon): https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-hive-metastore-external.html

5 – Paste it in a text editor and modify it carefully with the RDS details you noted earlier.

Note: Be careful: the value of each property cannot contain any spaces or line breaks and must appear on a single line. Save the file as "hiveConfiguration.json".

6 – The final JSON configuration file should look like the following:

[

  {

    "Classification": "hive-site",

    "Properties": {

      "javax.jdo.option.ConnectionURL": "jdbc:mysql:\/\/database-1.cefjr3enh3dk.us-east-2.rds.amazonaws.com:3306\/hive?createDatabaseIfNotExist=true",

      "javax.jdo.option.ConnectionDriverName": "org.mariadb.jdbc.Driver",

      "javax.jdo.option.ConnectionUserName": "admin",

      "javax.jdo.option.ConnectionPassword": "*********"

    }

  }

]

Note 1: Replace the hostname, username and password with your own details.

Note 2: The part "hive?createDatabaseIfNotExist=true" determines the name of the database to be created in RDS MySQL; in this case the database will be called "hive".

7 – After creating the above file, upload it to an S3 bucket/folder of your choice (in the same region as your resources).
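Hand-editing the JSON is where curly quotes and stray line breaks creep in. A safer option is to generate the file with Python's json module and upload it with boto3. The bucket name my-emr-config-bucket and the key config/hiveConfiguration.json are hypothetical placeholders; the endpoint and user are the ones from the example above.

```python
"""Sketch: generate hiveConfiguration.json with straight quotes and upload it."""
import json


def hive_site_config(host, user, password, database="hive", port=3306):
    """EMR configuration list with a single hive-site classification."""
    url = f"jdbc:mysql://{host}:{port}/{database}?createDatabaseIfNotExist=true"
    return [{
        "Classification": "hive-site",
        "Properties": {
            "javax.jdo.option.ConnectionURL": url,
            "javax.jdo.option.ConnectionDriverName": "org.mariadb.jdbc.Driver",
            "javax.jdo.option.ConnectionUserName": user,
            "javax.jdo.option.ConnectionPassword": password,
        },
    }]


if __name__ == "__main__":
    import boto3

    config = hive_site_config("database-1.cefjr3enh3dk.us-east-2.rds.amazonaws.com",
                              "admin", "*********")
    # json.dump writes every value on one line with straight quotes
    with open("hiveConfiguration.json", "w") as f:
        json.dump(config, f, indent=2)
    # Hypothetical bucket and key; keep the bucket in the same region
    boto3.client("s3").upload_file("hiveConfiguration.json", "my-emr-config-bucket",
                                   "config/hiveConfiguration.json")
```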

Creating the EMR:

8 – Now it is time to create the EMR cluster. Open the AWS EMR console and click the Create cluster button. This opens the Quick Options page, but we won't be using it; click Go to advanced options at the top of the page.

9 – This will send you to the Advanced Options page. There, under Software Configuration, select the following Applications:

Hadoop, Ganglia, Hive, Hue, Tez, Pig, Mahout

10 – On the same page, under the Edit software settings section, click Load JSON from S3 and browse to the S3 bucket/path where you uploaded the previously created file "hiveConfiguration.json". Select the file and click Select.

11 – On the Hardware Configuration page, make sure that the EMR cluster is in the same VPC as your RDS MySQL instance. Click Next if you don't want to change any network configuration or node types.

12 – Hit Next in the General Options page if you don’t want to change anything, although you might want to change the name of your EMR cluster here.

13 – On the next page, Security Options, make sure you have an EC2 key pair in that region and select it. Otherwise, create one!

Note: Create one now (if you don't have one) before creating the EMR cluster, as you CAN'T add it later!!!

14 – Still on the Security Options page, expand the EC2 security groups panel and change both the Master and the Core & Task instances to use the VPC's default security group (the same one whitelisted in the RDS MySQL security group earlier).

15 – Click Create cluster and wait for the EMR cluster to be created. It will take some time.
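The console steps 8 to 15 map onto a single EMR API call. The boto3 sketch below is an approximation under stated assumptions: the release label emr-5.36.0, the instance types and count, the subnet, key and security group IDs, and the default EMR roles are all placeholders, so check that the release you choose still ships every application in the list. It also attaches the default security group as an additional security group rather than replacing the EMR-managed groups, which differs slightly from step 14.

```python
"""Sketch: steps 8-15 as one boto3 run_job_flow call (placeholders throughout)."""

APPLICATIONS = ["Hadoop", "Ganglia", "Hive", "Hue", "Tez", "Pig", "Mahout"]


def job_flow_request(name, configurations, subnet_id, key_name, default_sg,
                     release_label="emr-5.36.0"):
    """Keyword arguments for EMR run_job_flow."""
    return {
        "Name": name,
        "ReleaseLabel": release_label,  # assumed release label
        "Applications": [{"Name": app} for app in APPLICATIONS],
        "Configurations": configurations,  # contents of hiveConfiguration.json
        "Instances": {
            "MasterInstanceType": "m5.xlarge",
            "SlaveInstanceType": "m5.xlarge",
            "InstanceCount": 3,
            "Ec2KeyName": key_name,  # cannot be added after creation
            "Ec2SubnetId": subnet_id,  # subnet in the same VPC as RDS MySQL
            # VPC default security group that RDS MySQL already whitelists
            "AdditionalMasterSecurityGroups": [default_sg],
            "AdditionalSlaveSecurityGroups": [default_sg],
            "KeepJobFlowAliveWhenNoSteps": True,
        },
        "JobFlowRole": "EMR_EC2_DefaultRole",
        "ServiceRole": "EMR_DefaultRole",
    }


if __name__ == "__main__":
    import json

    import boto3

    with open("hiveConfiguration.json") as f:
        configurations = json.load(f)
    request = job_flow_request("hive-external-metastore", configurations,
                               "subnet-0123456789abcdef0", "article_key",
                               "sg-0123456789abcdef0")
    response = boto3.client("emr", region_name="us-east-2").run_job_flow(**request)
    print(response["JobFlowId"])
```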

Confirming that the metastore was created in the RDS MySQL

16 – Once the EMR cluster is created, another rule needs to be added to the VPC's default security group: one that allows SSH into the EMR cluster on port 22 from your local IP.
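The same rule can be added with boto3. The security group ID and the IP address are placeholders (203.0.113.10 is from a documentation-only range); a /32 restricts port 22 to your machine alone.

```python
"""Sketch: the SSH ingress rule from step 16 (IDs and IP are placeholders)."""


def ssh_rule(my_ip):
    """IpPermissions entry allowing TCP 22 from a single address."""
    return [{
        "IpProtocol": "tcp",
        "FromPort": 22,
        "ToPort": 22,
        "IpRanges": [{"CidrIp": f"{my_ip}/32", "Description": "SSH to EMR from my IP"}],
    }]


if __name__ == "__main__":
    import boto3

    boto3.client("ec2", region_name="us-east-2").authorize_security_group_ingress(
        GroupId="sg-0123456789abcdef0",  # hypothetical VPC default security group
        IpPermissions=ssh_rule("203.0.113.10"),  # replace with your public IP
    )
```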

17 – With the right rules in place, try to connect to your EMR cluster from your local machine:

$ chmod 600 article_key.pem

$ ssh -i article_key.pem hadoop@ec2-18-XX-XX-XX.us-east-2.compute.amazonaws.com

18 – EMR has a MySQL client installed. Use it to connect to your MySQL database and run a few tests, such as checking that the security groups are working properly and that the "hive" database was created.

Note: You can do a telnet test from within EMR box as well to test Security Group access.

19 – To connect to the RDS MySQL, run the following command from your EMR box:

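mysql -h <rds-endpoint> -P 3306 -u <rds master user> -p

Enter the RDS master password when prompted. If you pass the password on the command line instead, there must be no space after -p; otherwise mysql treats the value as a database name.

Example: mysql -h database-1.cefjr3enh3dk.us-east-2.rds.amazonaws.com -P 3306 -u admin123 -p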

20 – Once connected, use the following commands to verify that the Hive metastore was indeed created in RDS. You should see a database named "hive" there:

show databases; -- Lists all databases; "hive" should be there

use hive; -- Connects you to the "hive" database

show tables; -- Lists all the metastore tables within the hive database

select * from TBLS; -- Lists all tables created in Hive. At this point there are none
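You can also inspect the metastore from Python instead of the mysql client. This sketch joins the TBLS and DBS tables of the Hive metastore schema; it assumes pymysql and reuses the endpoint and user from the JSON configuration, with the password masked.

```python
"""Sketch: list Hive tables straight from the metastore schema in RDS MySQL."""

METASTORE_SQL = (
    "SELECT d.NAME, t.TBL_NAME, t.TBL_TYPE "
    "FROM TBLS t JOIN DBS d ON t.DB_ID = d.DB_ID "
    "ORDER BY d.NAME, t.TBL_NAME"
)


def list_metastore_tables(conn):
    """Return (database, table, type) rows using any DB-API connection."""
    cur = conn.cursor()
    try:
        cur.execute(METASTORE_SQL)
        return cur.fetchall()
    finally:
        cur.close()


def tables_by_database(rows):
    """Group (database, table, type) rows into {database: [tables]}."""
    grouped = {}
    for db, table, _kind in rows:
        grouped.setdefault(db, []).append(table)
    return grouped


if __name__ == "__main__":
    import pymysql

    conn = pymysql.connect(host="database-1.cefjr3enh3dk.us-east-2.rds.amazonaws.com",
                           user="admin", password="*********", database="hive")
    try:
        print(tables_by_database(list_metastore_tables(conn)))
    finally:
        conn.close()
```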

Setting up necessary Spectrum Roles and Network requirements for Redshift and EMR

Note 1: Following steps assume that you already have a Redshift cluster and that you can connect to it. It will not guide you on how to create and access the Redshift cluster.

Note 2: If Redshift uses the same VPC default security group as EMR and RDS MySQL, they should already be able to communicate with each other; in that case, you can skip Step 22 and go straight to Step 23. If EMR and Redshift use different security groups, do Step 22 first.

21 – Create a Role for Spectrum and attach it to your Redshift cluster. Follow the instructions here:

• To Create the Role: https://docs.aws.amazon.com/redshift/latest/dg/c-getting-started-using-spectrum-create-role.html

• To Associate the Role: https://docs.aws.amazon.com/redshift/latest/dg/c-getting-started-using-spectrum-add-role.html

22 – (Optional) Now that Redshift can access S3, it also needs to access the EMR cluster, and vice versa. Follow the steps under the section "Enabling Your Amazon Redshift Cluster to Access Your Amazon EMR Cluster" at the following link: https://docs.aws.amazon.com/redshift/latest/dg/c-spectrum-external-schemas.html#c-spectrum-enabling-emr-access

Note: In summary, this creates an EC2 security group containing Redshift's security group and the EMR master node's security group. Redshift's security group must allow TCP on every port (0–65535), while EMR's security group must allow TCP on port 9083 (Hive's default metastore port). Next, you attach this newly created security group to both your Redshift and EMR clusters.

23 – Once this is done, you should be able to create the external schema in Redshift, query the external tables from Redshift, and create or see the schemas/tables from EMR Hive as well. However, at this point no tables have been created yet.

Creating Tables on Hive First

24 – Log in to the Hive console and run the following:

> show databases;

default (that’s the only database so far)

> create external table hive_table (col1 int, col2 string)

ROW FORMAT DELIMITED FIELDS TERMINATED BY '|'

location 's3://<your_bucket>/<your_folder>/';

> show tables;

hive_table (that’s the table we just created)

25 – Log back in to your MySQL database and run the following commands:

show databases; -- Lists all databases; "hive" should be there

use hive; -- Connects you to the "hive" database

show tables; -- Lists all the metastore tables within the hive database

select * from TBLS; -- Lists all tables created in Hive; "hive_table" is there!

Note: You can now see the newly created table "hive_table" in your external MySQL catalog.

Creating Schemas and Tables on Redshift Now

26 – On the Redshift side, an external schema must be created before creating or querying the Hive tables, as follows:

CREATE EXTERNAL SCHEMA emr_play -- Any name; this schema is valid only in Redshift

FROM HIVE METASTORE DATABASE 'default' -- Use the default database to match the database we have in Hive

URI '172.XXX.XXX.XXX' PORT 9083 -- Private IP of the EMR master instance; 9083 is Hive's default metastore port

IAM_ROLE 'arn:aws:iam::000000000000:role/spectrum'; -- A valid Spectrum role attached to Redshift

27 – Create the table(s):

create external table emr_play.redshift_table (col1 int, col2 varchar)

ROW FORMAT DELIMITED FIELDS TERMINATED BY '|'

STORED AS TEXTFILE

location 's3://<your_bucket>/<your_folder>/';

28 – Simply query the table now:

select * from emr_play.redshift_table;

29 – One more time, log back in to your MySQL database and run the following commands again:

show databases; -- Lists all databases; "hive" should be there

use hive; -- Connects you to the "hive" database

show tables; -- Lists all the metastore tables within the hive database

select * from TBLS; -- Lists all tables created in Hive; both tables are there!

Note: You should now see both the Hive and the Redshift tables in your external MySQL catalog. You can also query the tables and create new tables from both the Hive and the Redshift side.
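Steps 26 to 28 can also be run from Python with psycopg2. In this sketch the Redshift endpoint, the metastore IP 172.31.0.10 and the role ARN are placeholders. Autocommit is switched on because Redshift does not allow external DDL such as CREATE EXTERNAL TABLE inside a transaction block, and psycopg2 opens one by default.

```python
"""Sketch: create the external schema and query it from Python (placeholders)."""


def external_schema_ddl(schema, hive_db, metastore_ip, iam_role, port=9083):
    """CREATE EXTERNAL SCHEMA statement pointing at the EMR Hive metastore."""
    return (f"CREATE EXTERNAL SCHEMA {schema} FROM HIVE METASTORE DATABASE '{hive_db}' "
            f"URI '{metastore_ip}' PORT {port} IAM_ROLE '{iam_role}'")


if __name__ == "__main__":
    import psycopg2

    con = psycopg2.connect(dbname="dev", host="<redshift-endpoint>", port=5439,
                           user="awsuser", password="*********")
    # External DDL cannot run inside a transaction block, so use autocommit
    con.autocommit = True
    try:
        with con.cursor() as cur:
            cur.execute(external_schema_ddl("emr_play", "default", "172.31.0.10",
                                            "arn:aws:iam::000000000000:role/spectrum"))
            cur.execute("select * from emr_play.redshift_table")
            for row in cur.fetchall():
                print(row)
    finally:
        con.close()
```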

In this tutorial we will create a Python script that builds a data pipeline to load data from an Aurora MySQL RDS database into an S3 bucket and copy that data into a Redshift cluster.

I assume you have a basic understanding of AWS, RDS, MySQL, S3, Python and Redshift. Even if you don't, it's alright: I will briefly explain each of them for non-cloud DBAs.

AWS – Amazon Web Services. It is Amazon's cloud infrastructure platform, which can be used to build and host anything from a static website to a globally scalable service like Netflix

RDS – Relational Database Service, or RDS for short, is Amazon's managed relational database service for engines like its own Aurora, MySQL, PostgreSQL, Oracle and SQL Server

S3 – Simple Storage Service is AWS's distributed storage, which can scale almost infinitely. Data in S3 is stored in buckets. Think of buckets as directories, but with DNS-compliant names and hosted in the cloud

Python – A programming language that is now the de facto standard for data science and engineering

Redshift – AWS's petabyte-scale data warehouse, which is based on PostgreSQL and works with standard PostgreSQL clients and drivers, but uses a columnar storage engine

The source in this tutorial is an RDS Aurora MySQL database and the target is a Redshift cluster. The data is staged in an S3 bucket. With Aurora MySQL you can unload data directly to an S3 bucket, but in my script I offload the table to the local filesystem and then copy it to the S3 bucket. This gives you flexibility in case you are using a standard MySQL or MariaDB database rather than Aurora.

Environment:

Make sure the security groups of both the Aurora MySQL cluster and the Redshift cluster allow inbound connections from the IP of the EC2 instance that runs the script, on the database ports (3306 for MySQL and 5439 for Redshift)

Aurora MySQL 5.6

CREATE TABLE `employee` (

`id` int(11) NOT NULL,

`first_name` varchar(45) DEFAULT NULL,

`last_name` varchar(45) DEFAULT NULL,

`phone_number` varchar(45) DEFAULT NULL,

`address` varchar(200) DEFAULT NULL,

PRIMARY KEY (`id`)

) ENGINE=InnoDB DEFAULT CHARSET=latin1;

Redshift

DROP TABLE IF EXISTS employee CASCADE;

CREATE TABLE employee

(

id bigint NOT NULL,

first_name varchar(45),

last_name varchar(45),

phone_number varchar(45), -- varchar keeps the leading zeros of the source phone numbers

address varchar(200)

);

ALTER TABLE employee

ADD CONSTRAINT employee_pkey

PRIMARY KEY (id);

COMMIT;

2. Install Python 3.7.2 and the packages needed by the script (json and csv are part of the Python standard library and do not need to be installed)

sudo /usr/local/bin/python3.7 -m pip install boto3

sudo /usr/local/bin/python3.7 -m pip install psycopg2-binary

sudo /usr/local/bin/python3.7 -m pip install pymysql

3. Insert sample data into the source RDS Aurora DB

$ mysql -u awsuser -h shadmha-cls-aurora.ap-southeast-2.rds.amazonaws.com -p dev

INSERT INTO `employee` VALUES (1,'shadab','mohammad','04447910733','Randwick'),(2,'kris','joy','07761288888','Liverpool'),(3,'trish','harris','07766166166','Freshwater'),(4,'john','doe','08282828282','Newtown'),(5,'mary','jane','02535533737','St. Leonards'),(6,'sam','rockwell','06625255252','Manchester');

SELECT * FROM employee;

4. Download and Configure AWS command line interface

The AWS Python SDK boto3 does not need the AWS CLI itself, but it reads its credentials from the same ~/.aws/credentials and ~/.aws/config files that "aws configure" writes, and the script uses boto3 functions to upload the file to S3. Install the AWS CLI on Linux and configure it:

$ aws configure

AWS Access Key ID [****************YGDA]:

AWS Secret Access Key [****************hgma]:

Default region name [ap-southeast-2]:

Default output format [json]:

5. Python Script to execute the Data Pipeline (datapipeline.py)

import csv

import datetime

import os

import sys

import boto3

import psycopg2

import pymysql

# Local staging file and S3 location used by the pipeline

csv_file_path = '/home/centos/my_csv_file.csv'

s3_bucket = 'mybucket-shadmha'

s3_key = 'my_csv_file.csv'

print("###### Data Pipeline from Aurora MySQL to S3 to Redshift ######")

print("")

print("Start TimeStamp")

print("---------------")

print(datetime.datetime.now())

print("")

# Connect to Aurora MySQL and download the table as a pipe-delimited file

db_opts = {

    'user': 'awsuser',

    'password': '******',

    'host': 'shadmha-cls-aurora.ap-southeast-2.rds.amazonaws.com',

    'database': 'dev'

}

sql = 'SELECT * FROM employee'

db = pymysql.connect(**db_opts)

try:

    cur = db.cursor()

    cur.execute(sql)

    rows = cur.fetchall()

    # The column name is the first entry of each tuple in cursor.description

    column_names = [col[0] for col in cur.description]

finally:

    db.close()

# Continue only if the query returned rows

if not rows:

    sys.exit("No rows found for query: {}".format(sql))

# Write a header row followed by the data rows

with open(csv_file_path, 'w', newline='') as csvfile:

    csvwriter = csv.writer(csvfile, delimiter='|', quotechar='"', quoting=csv.QUOTE_MINIMAL)

    csvwriter.writerow(column_names)

    csvwriter.writerows(rows)

# Upload the generated file to the S3 bucket

s3 = boto3.resource('s3')

s3.Bucket(s3_bucket).upload_file(csv_file_path, s3_key)

# Obtain the connection to Redshift

con = psycopg2.connect(dbname='dev', host='redshift-cluster-1.ap-southeast-2.redshift.amazonaws.com',

                       port='5439', user='awsuser', password='*********')

# COPY command; ignoreheader 1 skips the header row written above

copy_command = "copy employee from 's3://mybucket-shadmha/my_csv_file.csv' credentials 'aws_iam_role=arn:aws:iam::775888:role/REDSHIFT' delimiter '|' region 'ap-southeast-2' ignoreheader 1 removequotes;"

# Open a cursor and reload the table. TRUNCATE commits immediately in Redshift,

# so DELETE is used instead: the delete and the COPY then commit together.

cur = con.cursor()

cur.execute("delete from employee;")

cur.execute(copy_command)

con.commit()

# Close the cursor and the connection

cur.close()

con.close()

# Remove the staged file from S3 and from the local filesystem

s3.Object(s3_bucket, s3_key).delete()

os.remove(csv_file_path)

print("End TimeStamp")

print("-------------")

print(datetime.datetime.now())

print("")

6. Run the Script or Schedule in Crontab as a Job

$ python3.7 datapipeline.py

Crontab entry to run the job daily at 10:30 am (cron runs commands with /bin/sh, so use >> file 2>&1 rather than the bash-only &>>)

30 10 * * * /usr/local/bin/python3.7 /home/centos/datapipeline.py >> /tmp/datapipeline.log 2>&1

7. Check the table in the destination Redshift cluster; all the records should be visible there

SELECT * FROM employee;

This tutorial used a small table with very little data, but with S3's distributed nature and massive scale, and Redshift as a data warehouse, you can build data pipelines for very large datasets. Redshift is an OLAP database and Aurora an OLTP one, and many real-life scenarios require offloading data from OLTP apps to data warehouses or data marts to run analytics on it.

AWS also has an excellent managed service called AWS Data Pipeline, which can automate the movement and transformation of data. But often, when developing customized solutions, Python is the best tool for the job.

Enjoy this script, and please let me know in the comments or on Twitter (@easyoradba) if you have any issues or what else you would like me to post about data engineering.

Amazon Web Services has been gaining popularity over the last few years as cloud computing has come into the spotlight. Slowly, traditional enterprises are making the journey to the cloud. Oracle Database is considered one of the most mission-critical applications in the enterprise, and moving it to the cloud can bring benefits from both an operational and a financial perspective.

In this exercise we will move an on-premise Oracle DB schema to an AWS RDS Instance running Oracle 12cR1

Pre-requisites :

1. You already have a source Oracle database installed

2. You know how to provision an AWS RDS Oracle Instance

3. You have access to both instances

4. You have basic understanding of AWS S3 and AWS console

5. You have the latest version of SQL Developer installed on your machine

Source DB:

Oracle 12cR1 (12.1.0.2) running on CentOS 7.1

Destination DB:

Oracle 12cR1 running on AWS RDS Instance

High Level Steps to Migrate:

1. Create the destination Oracle 12cR1 instance on AWS. Provisioning an Oracle DB on AWS RDS is one of the easiest steps.

2. Connect to Both Source(on-Prem) and Destination(AWS) Database from SQL Developer

3. Go to Tools > Database Copy and Select Source and Destination Databases

I prefer to do a Tablespace Copy, since most of the apps I work with reside in a single tablespace, but this is your choice. You can choose Objects, Schemas or even entire Tablespaces to be copied across.

IMPORTANT : Make sure you have created the source schema in the destination database before proceeding to the next step, otherwise you will get the error "User does not exist"

In Destination AWS RDS run below commands

SQL> create user <source-schema-name> identified by <password123>;

SQL> grant dba to <source-schema-name>;

4. Start the Database Copy

5. Use the Performance Insights console to check what's happening in the background

6. Query the Destination Database to See if the Objects are valid and have arrived

SQL> select * from user_tables;

SQL> select * from dba_objects where status='INVALID';
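Beyond INVALID objects, it is worth checking that every object actually arrived. This is a sketch assuming python-oracledb, a hypothetical schema name APPSCHEMA and placeholder DSNs; it counts DBA_OBJECTS per object type on both sides and reports the types whose counts differ.

```python
"""Sketch: compare object counts per type between the source and RDS."""

COUNT_SQL = ("SELECT object_type, COUNT(*) FROM dba_objects "
             "WHERE owner = :owner GROUP BY object_type")


def count_differences(source_counts, target_counts):
    """Return {object_type: (source, target)} for types whose counts differ."""
    types = set(source_counts) | set(target_counts)
    return {t: (source_counts.get(t, 0), target_counts.get(t, 0))
            for t in sorted(types)
            if source_counts.get(t, 0) != target_counts.get(t, 0)}


def object_counts(conn, owner):
    """{object_type: count} for one schema."""
    with conn.cursor() as cur:
        cur.execute(COUNT_SQL, owner=owner.upper())
        return dict(cur.fetchall())


if __name__ == "__main__":
    import oracledb

    owner = "APPSCHEMA"  # hypothetical schema name
    with oracledb.connect(user="system", password="****", dsn="<on-prem-dsn>") as src, \
         oracledb.connect(user="admin", password="****", dsn="<rds-dsn>") as tgt:
        diffs = count_differences(object_counts(src, owner), object_counts(tgt, owner))
    for object_type, (s, t) in diffs.items():
        print(f"{object_type}: source={s} target={t}")
    print("Object counts match" if not diffs else f"{len(diffs)} object type(s) differ")
```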